The word ‘penalty’ gets bandied about so much in the SEO community that it can be hard to keep track of which practices actually cause a penalty and which merely damage performance.

That’s why we decided to compile the general SEO issues and penalties into one post, so that you can easily refer back to it and tell the difference when you need to.

Penalties (applied negative effects)

In these cases, Google deliberately applies a negative effect that otherwise wouldn’t exist, because a site has not adhered to its guidelines.

1. Manipulating backlinks

As Google says in its manual action alert for unnatural links, ‘a pattern of unnatural artificial, deceptive, or manipulative links’ pointing to one site might incur a penalty.

2. Cloaking/redirects for Googlebot

Showing different content or URLs to users and search engines (known as cloaking), or redirecting Googlebot but not users, violates Google’s Webmaster Guidelines and can result in a penalty.

This includes:

- Text and links that are only shown to search engines

- Showing HTML to search engines, but showing only Flash/images to users.

However, Google’s John Mueller did mention an exception in a recent Webmaster Hangout. Although it’s technically cloaking, John said he doesn’t find it ‘that problematic’ to redirect Googlebot from URLs with tracking parameters to the canonical URLs while leaving users unredirected, so that their visits can still be tracked in analytics. John discusses this at the 53:17 mark of the Hangout.

3. Doorway pages

Google considers it spam to create several doorway pages that are designed specifically to rank in search results but all lead users to the same destination.

4. Thin content

Google’s Panda ranking update was designed to stop sites with poor-quality content from appearing in search results. Google looks for sites with:

- a high proportion of navigation, image or dynamic elements and too little copy
- too many blank pages
- ad-stuffing
- technical glitches that hinder a user’s experience

5. Auto-generated content

Most content that has been generated automatically will be in violation of Google’s Webmaster Guidelines. This can include automatically translated content, scraped content from Atom/RSS feeds or search results, or content that has been stitched together from other sources without adding any unique value.

6. Irrelevant content and markup

In a recent Google Webmaster Hangout, John Mueller said “From a policy point of view, we do want to have the content visible on the pages when it’s marked-up, so that’s obviously easier with the existing schema markup, microformats etc, and harder to check with JSON-LD because the JSON-LD markup is essentially JavaScript that’s separate from the HTML on the page.”

The implication here is that you might be penalized if you’re trying to trick Google with markup that’s not visible on the page.
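If you use JSON-LD, you can sanity-check a page against this policy by confirming that the values in the markup also appear in the text a user can see. The Python sketch below is a rough, hypothetical audit that uses only the standard library. The class and function names are our own.

It works in three steps:

- It collects the contents of application/ld+json script blocks.
- It gathers the visible text outside script and style elements.
- It reports any JSON-LD string values that don't appear in that text.

Matching is a simple case-insensitive substring test. This is a heuristic built on several assumptions, not a description of how Google checks markup.

```python
import json
from html.parser import HTMLParser


class MarkupAuditParser(HTMLParser):
    """Separates JSON-LD blocks from the text a visitor would actually see."""

    def __init__(self):
        super().__init__()
        self.json_ld_blocks = []   # raw contents of each <script type="application/ld+json">
        self.visible_text = []     # text chunks outside <script> and <style>
        self._in_script_or_style = False
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._in_script_or_style = True
            if tag == "script" and dict(attrs).get("type") == "application/ld+json":
                self._in_json_ld = True
                self.json_ld_blocks.append("")

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._in_script_or_style = False
            self._in_json_ld = False

    def handle_data(self, data):
        if self._in_json_ld:
            self.json_ld_blocks[-1] += data
        elif not self._in_script_or_style:
            self.visible_text.append(data)


def string_values(node):
    """Yield every string value nested in parsed JSON-LD, skipping @context, @type etc."""
    if isinstance(node, dict):
        for key, value in node.items():
            if not key.startswith("@"):
                yield from string_values(value)
    elif isinstance(node, list):
        for item in node:
            yield from string_values(item)
    elif isinstance(node, str):
        yield node


def normalize_text(text):
    """Collapse whitespace and lowercase, so matching ignores layout and case."""
    return " ".join(text.split()).lower()


def hidden_markup_values(html):
    """Return JSON-LD string values that don't appear anywhere in the visible text."""
    parser = MarkupAuditParser()
    parser.feed(html)
    parser.close()
    visible = normalize_text(" ".join(parser.visible_text))
    missing = []
    for block in parser.json_ld_blocks:
        for value in string_values(json.loads(block)):
            if normalize_text(value) not in visible:
                missing.append(value)
    return missing


page = """<html><body>
<h1>Blue Widget</h1>
<p>Rated 4.5 by our customers.</p>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product",
 "name": "Blue Widget", "description": "The best widget ever made"}
</script>
</body></html>"""

print(hidden_markup_values(page))  # ['The best widget ever made']
```

Expect false positives, such as URLs, dates or paraphrased descriptions. Treat the output as a list of values to review rather than a verdict.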

7. Undeclared advertorial

As Matt Cutts commented in 2013, links in advertorials (advertising content displayed as editorial, such as a blog post or news article) should be nofollowed so that they don’t pass PageRank, and the advertorial itself should be clearly marked as such.

Undeclared advertorials can lead to a site (and the sites that linked to it) being penalized.

8. Site performance – severe

Issues with site performance that severely hinder a user’s experience of your site could lead to the site being removed from search results, effectively the same as a penalty. Help speed up your site by adhering to Google’s PageSpeed Insights Rules.

9. Hacked spam (including malware)

In October 2015, Google rolled out an update designed to deal ‘aggressively’ with hacked spam in search results. The intended effect is that hacked spam no longer shows up in search results.

10. HTTP-only

In August 2014, Google announced that it would give a small ranking advantage to sites that use HTTPS. However, this is only a slight signal at the moment. Find out more in our HTTPS configuration guide.

11. Mobile incompatibility

Because of Google’s mobile-friendly update, sites that don’t meet its mobile-friendly requirements won’t get a ranking boost in mobile search results.

12. Interstitials

As of November 1st 2015, pages with app-install interstitial ads that cover a ‘significant amount of content’ are not considered mobile-friendly. They will not rank as well as mobile-friendly pages.

SEO issues (won’t cause a penalty)

These issues may hurt engagement, or lead Google to index the content differently from how you would prefer. However, Google tries to mitigate their impact. It’s a good idea to sort these issues out where possible, but they shouldn’t incur a Google penalty.

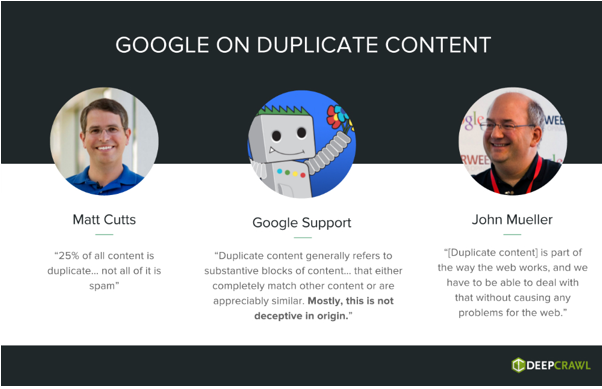

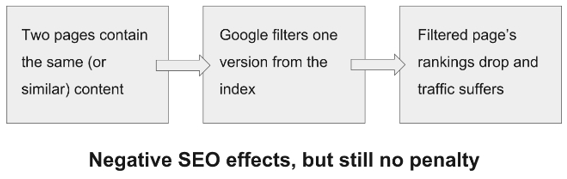

1. Duplicate content

There is no duplicate content penalty, but Google may try to filter duplicates from the index by choosing one primary version of the content (even if this is not specified in a canonical tag or redirect) and favour this one version in search results.

Duplication across different sites can cause confusion about which version of the content should be indexed and shown in results. The resulting loss of visibility can lead site owners to assume that a manual penalty is at play.

Duplicate content can include (but is not limited to):

- Domain duplication: www/non-www and HTTP/HTTPS

- The same URL with different parameters

- Duplicate product descriptions on different URLs/sites

- Separate mobile-friendly URLs, printer-friendly URLs etc

- Press release content used on several news publications

- Affiliate content

- Syndicated content

- Tag and category pages

- Localized content (similar content containing information tailored to users in a specific region)

Google does not regard the following as duplicate content, so it will not usually try to ‘fold’ these pages into one:

- Same content translated into multiple languages

- Different pages with the same meta title and meta description

- Equivalent web and app content

To help mitigate the impact of duplicate content, use canonical tags, hreflang and 301 redirects, and add value to existing content where appropriate. Read more in our guide to the 7 duplicate content issues you need to fix.
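You can spot some of the duplication sources listed above by normalizing crawled URLs to a single form and grouping the variants. This covers www/non-www, HTTP/HTTPS and the same URL with different parameters. The minimal Python sketch below rests on three assumptions:

- Your preferred version is HTTPS on the www host.
- The site has no other subdomains.
- The parameters in TRACKING_PARAMS never change the page content. This is an illustrative list, not an official one.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption for this sketch: these query parameters are for tracking only.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign",
                   "utm_term", "utm_content", "gclid"}


def normalize(url):
    """Map URL variants to one candidate canonical form (HTTPS, www, sorted params)."""
    parts = urlsplit(url)
    host = parts.hostname or ""        # lowercased, with any port removed
    if not host.startswith("www."):    # assumes no subdomains other than www
        host = "www." + host
    # Drop tracking parameters; sort the rest so parameter order doesn't matter.
    query = sorted((k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
                   if k not in TRACKING_PARAMS)
    return urlunsplit(("https", host, parts.path or "/", urlencode(query), ""))


def group_duplicates(urls):
    """Group URLs that normalize to the same canonical candidate."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}


crawled = [
    "http://example.com/shoes?utm_source=newsletter",
    "https://www.example.com/shoes",
    "https://www.example.com/shoes?colour=red&size=9",
    "https://www.example.com/shoes?size=9&colour=red",
]
for canonical, variants in group_duplicates(crawled).items():
    print(canonical, "<-", variants)
```

Each group is a candidate for a 301 redirect or a canonical tag pointing at the normalized URL. The sketch only finds the variants. It says nothing about which version Google will actually choose.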

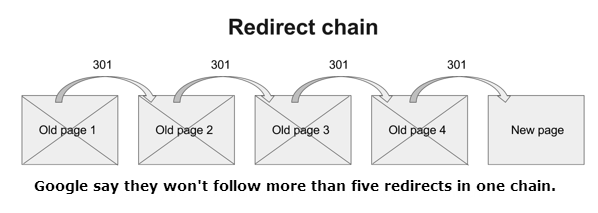

2. Redirect chains

Redirect chains, where one page redirects to another, which then redirects to a third, have two costs. They slow Googlebot’s progress through your site, which reduces crawl efficiency, and they increase load times for users.
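Suppose you can export your redirects as a simple source-to-target list, for example from your server configuration or a crawl. You can then find chains by following each source until it reaches a URL that doesn't redirect, and update every source to point straight at that final destination. The minimal Python sketch below works under that assumption. The function name and the 10-hop cap are our own illustrative choices.

```python
def resolve_redirects(redirects, max_hops=10):
    """Flatten a redirect map so every source points straight at its final target.

    `redirects` maps a source URL to the URL it currently redirects to.
    Returns (flattened, chains): `flattened` maps each source to its final
    destination; `chains` records the full path for any source needing more
    than one hop. Raises ValueError on a loop or an overly long chain.
    """
    flattened, chains = {}, {}
    for source in redirects:
        path = [source]
        current = source
        while current in redirects:
            current = redirects[current]
            if current in path:
                raise ValueError("redirect loop: " + " -> ".join(path + [current]))
            path.append(current)
            if len(path) - 1 > max_hops:
                raise ValueError(f"chain from {source} exceeds {max_hops} hops")
        flattened[source] = current
        if len(path) > 2:  # more than one hop means this source is part of a chain
            chains[source] = path
    return flattened, chains


redirect_map = {
    "/old-page": "/newer-page",
    "/newer-page": "/newest-page",
    "/promo": "/newest-page",
}
flat, chains = resolve_redirects(redirect_map)
print(flat)    # {'/old-page': '/newest-page', '/newer-page': '/newest-page', '/promo': '/newest-page'}
print(chains)  # {'/old-page': ['/old-page', '/newer-page', '/newest-page']}
```

Writing the flattened map back into your redirect rules means each old URL needs only a single hop.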

Users might give up if it takes longer than they expect to reach the destination page. This hurts their loyalty to your brand and also your engagement metrics. If they have to wait for a page to load, they’re more likely to bounce back to the search results. If this becomes a significant problem, it could in turn affect your rankings.

3. 500 errors

Returning 500 errors won’t cause a penalty, but it might affect your rankings. In a recent Google Webmaster Hangout, Google’s John Mueller explained that Googlebot might take server errors to mean that it is crawling your website too quickly, and respond by slowing its crawl rate. A lower crawl rate might mean that Google sees less of your content and indexes new content later than it normally would.

If a site persistently returns 500 errors to Google, Google will eventually treat the affected URLs like 404s and drop them from search results.
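To check whether Googlebot is actually receiving server errors, you can count 5xx responses to its requests in your access logs. The minimal Python sketch below assumes your server writes the common Apache/Nginx "combined" log format. It trusts the user-agent string, which can be spoofed. A rigorous audit would also verify the requesting IPs, for example with a reverse DNS lookup.

```python
import re
from collections import Counter

# Assumes the "combined" log format, e.g.:
# 66.249.66.1 - - [10/Oct/2015:13:55:36 +0000] "GET /page HTTP/1.1" 503 0 "-" "...Googlebot/2.1..."
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_server_errors(log_lines):
    """Count 5xx responses per path for requests whose user agent mentions Googlebot."""
    errors = Counter()
    for line in log_lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that aren't in the expected format
        if "Googlebot" in match["agent"] and match["status"].startswith("5"):
            errors[match["path"]] += 1
    return errors


bot = '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
sample = [
    '66.249.66.1 - - [10/Oct/2015:13:55:36 +0000] "GET /category/shoes HTTP/1.1" 503 0 "-" ' + bot,
    '66.249.66.1 - - [10/Oct/2015:13:56:01 +0000] "GET /category/shoes HTTP/1.1" 500 0 "-" ' + bot,
    '203.0.113.7 - - [10/Oct/2015:13:57:12 +0000] "GET /category/shoes HTTP/1.1" 500 0 "-" "Mozilla/5.0"',
    '66.249.66.1 - - [10/Oct/2015:13:58:40 +0000] "GET /about HTTP/1.1" 200 1043 "-" ' + bot,
]
print(googlebot_server_errors(sample))  # Counter({'/category/shoes': 2})
```

Paths that keep appearing in this count are the ones at risk of being crawled less often or eventually dropped.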

4. Lots of 404s / not redirected URLs

Again, there is no penalty for returning too many 404 errors or for leaving expired pages without redirects. If Googlebot finds a page that returns a 404, that page will be removed from Google’s index. If the page is reactivated later, it will be recrawled and can be indexed again.

However, if users land on 404’d pages regularly then it could affect engagement, so it’s best to either create an engaging 404 page that might allow users to find what they need, or 301 redirect to an equivalent alternative.

5. Incorrect canonical tags

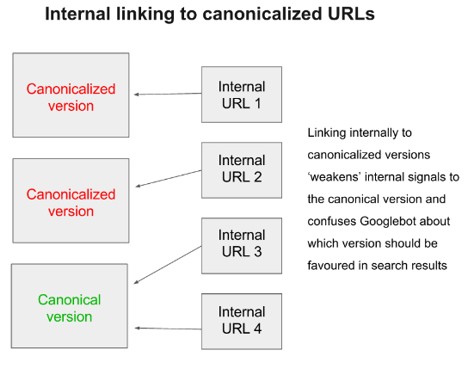

Google recommends that internal links point only to canonical URLs, but there is no penalty for linking to canonicalized URLs. However, linking to different versions ‘weakens’ the signals pointing at the primary version of your page. This might lead Google to favour the wrong version of your content in search results.

Similarly, there is no penalty for pointing canonical tags at the wrong version. However, it makes it harder for Googlebot to work out which version of the content you want indexed, and the wrong version might appear in search results.

Additionally, Google might ignore your canonical tags if you canonicalize many pages to a single URL with dissimilar content.

6. Redirecting many URLs to one

John Mueller addressed this in the Google Webmaster Hangout on 16th October. If too many URLs redirect to a single unrelated URL (such as the homepage), those redirects may be treated as soft 404s instead. Redirecting lots of old content to a page that is not a direct equivalent confuses users, and Google can’t “equate” the old pages to anything.
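Google hasn’t published a threshold for this. You can still spot the pattern in your own redirect map by counting how many old URLs point at each target. The minimal Python sketch below assumes the redirects are available as a source-to-target mapping. The threshold of 20 is an arbitrary illustrative value.

```python
from collections import Counter


def many_to_one_targets(redirects, threshold=20):
    """Return redirect targets that absorb at least `threshold` source URLs.

    `redirects` maps old URL -> redirect target. The threshold is arbitrary;
    targets it flags (the homepage is the classic case) are worth reviewing
    to see whether the old pages have a genuine equivalent there.
    """
    counts = Counter(redirects.values())
    return {target: n for target, n in counts.most_common() if n >= threshold}


# Illustrative map: 50 discontinued products all sent to the homepage,
# plus one redirect to a genuine equivalent.
redirect_map = {f"/discontinued-product-{i}": "/" for i in range(50)}
redirect_map["/old-blue-widget"] = "/blue-widget"
print(many_to_one_targets(redirect_map))  # {'/': 50}
```

For each flagged target, consider redirecting the old URLs to closer equivalents, or letting them return a 404 if no equivalent exists.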

7. Site performance (minor)

Google cares a great deal about engagement, and its Webmaster Guidelines specifically recommend optimizing the speed of your site. However, minor issues with load times shouldn’t affect rankings or performance.

Any improvements you can make to help users on their way through the site (reducing load times as much as possible, for example) will help engagement and brand loyalty, however.

8. Invalid HTML

As John Mueller has mentioned, Google will still try to understand and rank a page that contains invalid HTML. Ideally, everything on the page would be valid so that Google can understand the content. However, Google recognises that ‘in the real world most pages aren’t valid HTML’, so Googlebot has to be able to deal with that.

However, if a page’s HTML is badly broken, Google may be unable to read it, in which case its SEO performance may suffer.

9. Redirects/404s in Sitemaps

You can submit an XML Sitemap containing expired pages (that return a 404) to help get them removed from the index more quickly. It’s best to put these in a separate Sitemap so that you can monitor them apart from your indexable URLs.

You should remove them once they have been removed from the index to make sure index counts reflect the real state of your site and to avoid continual crawling of the URLs.
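A separate Sitemap for expired URLs only needs the basic sitemaps.org structure: a urlset element containing one url/loc entry per page. Below is a minimal sketch using Python's standard xml.etree.ElementTree module. The URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemaps.org-format XML document (as UTF-8 bytes) listing the given URLs."""
    ET.register_namespace("", SITEMAP_NS)  # serialize as xmlns="..." rather than ns0:
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in urls:
        url_element = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url_element, f"{{{SITEMAP_NS}}}loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Placeholder URLs for pages that now return a 404.
expired = [
    "https://www.example.com/old-offer",
    "https://www.example.com/retired-product",
]
print(build_sitemap(expired).decode("utf-8"))
```

ElementTree escapes characters such as & in URLs, which the Sitemap format requires. You would save the output as its own file and submit it alongside your main Sitemap.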

10. Blocked/unblocked JavaScript files

Google recommends allowing all of your site’s assets to be crawled, so that Googlebot can render a page as a browser would and check it for mobile compatibility. However, there is no penalty for either blocking or allowing these files.

If these files are disallowed, Googlebot might not be able to tell whether your site is mobile-friendly. It might also fail to understand your page if you use JavaScript to display content.
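To check whether your robots.txt blocks Googlebot from your JavaScript or CSS files, you can test the asset URLs against its rules. Below is a minimal sketch using Python's standard urllib.robotparser. Treat it only as an approximation of Googlebot's behaviour. The standard-library parser applies the first matching rule and doesn't support the * and $ wildcards, whereas Google uses the most specific matching rule. The robots.txt and asset URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser


def blocked_assets(robots_txt, asset_urls, user_agent="Googlebot"):
    """Return the asset URLs that robots.txt disallows for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in asset_urls if not parser.can_fetch(user_agent, url)]


robots = """
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""
assets = [
    "https://www.example.com/assets/js/app.js",
    "https://www.example.com/assets/css/site.css",
]
print(blocked_assets(robots, assets))  # ['https://www.example.com/assets/js/app.js']
```

Any URL this flags is a file Googlebot probably can't fetch when rendering your pages.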

11. Only using HTTP/2

At the time of writing, Google can’t crawl sites that only work over HTTP/2. John Mueller has mentioned that Google is working on this, and he suspects it will be ready around the end of the year.

General SEO practices (won’t cause a penalty, and aren’t issues either)

1. Geographic redirects

In 2009, Matt Cutts discussed serving different content to users based on their IP location, and explained how this differs from cloaking.

2. Nofollowing links

In his Webmaster Hangout on 25th September, Google’s John Mueller stated: “It is not something that we would say that there is any SEO advantage of linking to someone else’s site.”

From John’s comment, we can reasonably infer that if linking externally carries no SEO advantage, then nofollowing all your external links won’t have any negative impact either. There’s certainly no penalty for it.


We hope you’ve found this post useful in learning which issues Google will, and won’t, penalise you for.
