Lumar can crawl your entire website, including subdomains. However, you need to configure the advanced settings before Lumar can access URLs on subdomains.

In this guide, we will take you through how to set up and configure our advanced settings to crawl either all or specific subdomains.

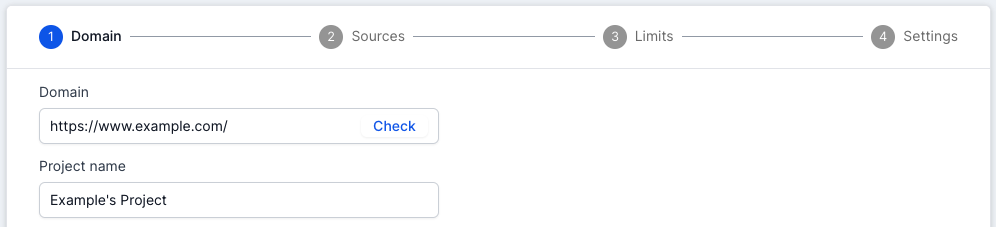

Select the Primary Domain

First, select the primary domain in step 1 of the project settings.

By default, the domain (www or non-www) listed as the primary domain defines the domain scope and crawl start URL.

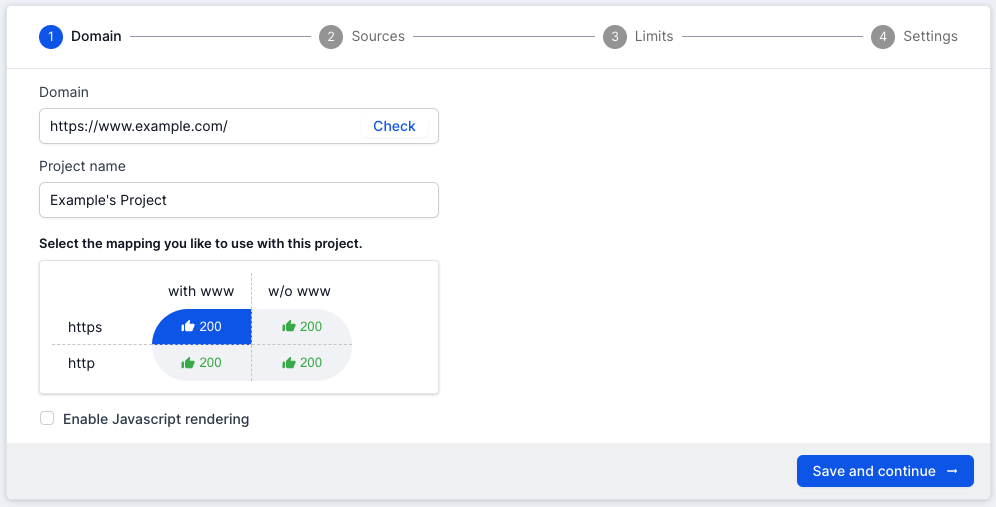

Check your domain mappings

Once you have added your primary domain, use the “Check” domain mapping tool. The “Check” tool quickly checks the HTTP status codes for different variations of the primary domain URL.

This lets you quickly see whether the URL you entered as the primary domain is the canonical version, or whether there are duplicate versions of the URL which could also be crawled by Lumar.

- 2xx status code: The URL variations are live, and the contents of the page can be accessed by Lumar.

- 3xx status code: The URL variations are redirected, and the contents of the page can’t be accessed by Lumar.

- 4xx status code: The URL variations are not live, and the contents of the page can’t be accessed by Lumar.

If the URL variations are live (200 status code) then we recommend investigating to see if they need to be crawled by Lumar. If they need to be crawled, then follow the next steps.
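As a rough illustration of what the check covers, the sketch below builds the four common variations of a primary domain (HTTP/HTTPS and www/non-www) and maps a status code to the groups listed above. The function names `domain_variations` and `describe_status` are hypothetical, and this is not Lumar's implementation; fetching the actual status codes is deliberately left out.

```python
from urllib.parse import urlsplit


def domain_variations(primary_url: str) -> list[str]:
    """Return the HTTP/HTTPS and www/non-www variations of a URL's host."""
    host = (urlsplit(primary_url).hostname or "").lower()
    # Assumption: the primary domain is either the bare domain or its www form.
    bare = host[4:] if host.startswith("www.") else host
    return [
        f"{scheme}://{prefix}{bare}/"
        for scheme in ("http", "https")
        for prefix in ("", "www.")
    ]


def describe_status(code: int) -> str:
    """Map an HTTP status code to the groups described above."""
    if 200 <= code < 300:
        return "live"
    if 300 <= code < 400:
        return "redirected"
    if 400 <= code < 500:
        return "not live"
    return "other"
```

Any variation that comes back as "live" in addition to the primary domain is a candidate for the follow-up investigation described above.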

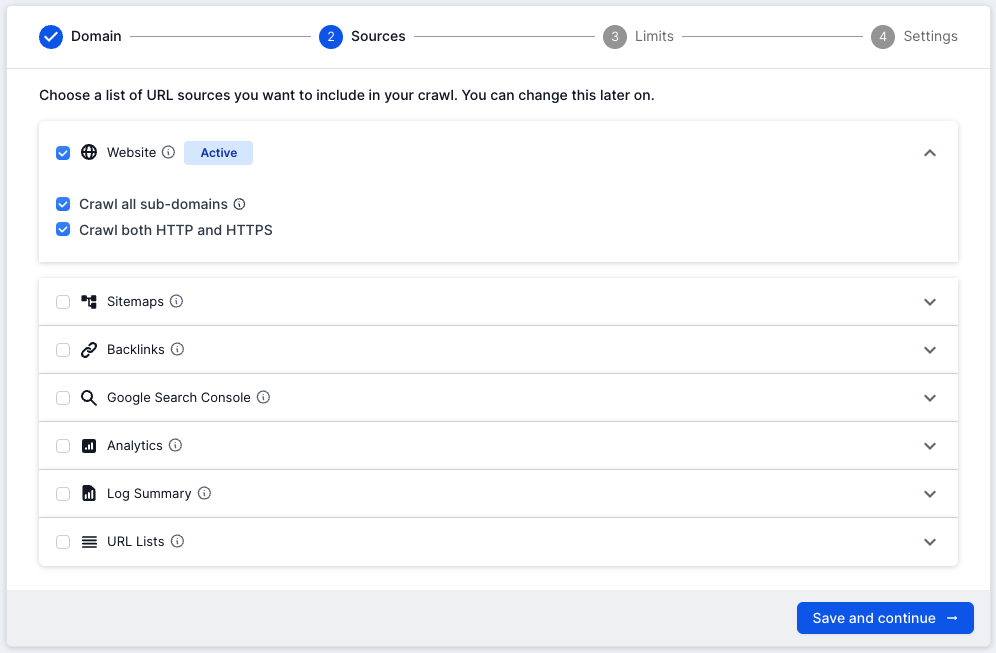

Allowing Lumar to crawl all subdomains

If you select the ‘Crawl subdomains’ and ‘Crawl both HTTP and HTTPS’ options within the Website crawl source in step 2, Lumar will automatically include all URLs (both HTTP and HTTPS URLs) on any subdomains of your primary domain which are discovered during the crawl.

For example, if these options were selected for the primary domain https://www.lumar.com/ then all HTTP and HTTPS URLs found in the crawl for the following subdomains could be crawled:

- https://example.lumar.com/

- https://blog.lumar.com/

- https://m.lumar.com/

Lumar would not crawl subdomains that belong to a different domain, for example:

- https://amp.theguardian.com/

- https://privacy.microsoft.com/
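The scope rule in these examples can be sketched as a simple hostname comparison. This is only an illustration under the assumption that the primary domain is a bare domain or its www form; `root_of` and `is_subdomain_in_scope` are hypothetical helper names, not part of Lumar.

```python
from urllib.parse import urlsplit


def root_of(primary_url: str) -> str:
    """Strip a leading 'www.' from the primary domain's hostname."""
    host = (urlsplit(primary_url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def is_subdomain_in_scope(url: str, primary_url: str) -> bool:
    """True if the URL's host is the root domain or one of its subdomains."""
    host = (urlsplit(url).hostname or "").lower()
    root = root_of(primary_url)
    # The leading dot prevents 'notlumar.com' from matching 'lumar.com'.
    return host == root or host.endswith("." + root)
```

With https://www.lumar.com/ as the primary domain, `is_subdomain_in_scope` returns `True` for https://blog.lumar.com/ and `False` for https://amp.theguardian.com/.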

The only drawback of allowing all subdomains to be crawled is that you could end up using URL credits on pages which do not need to be crawled.

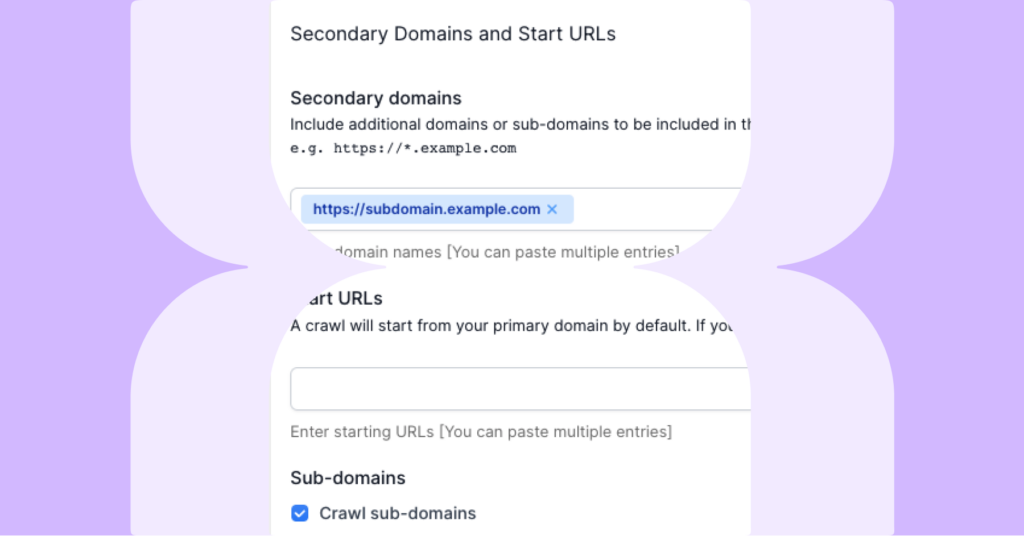

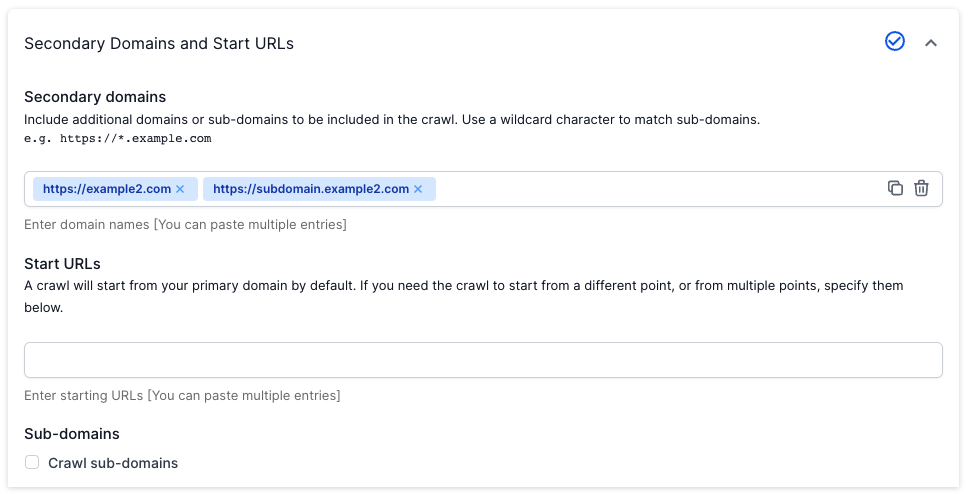

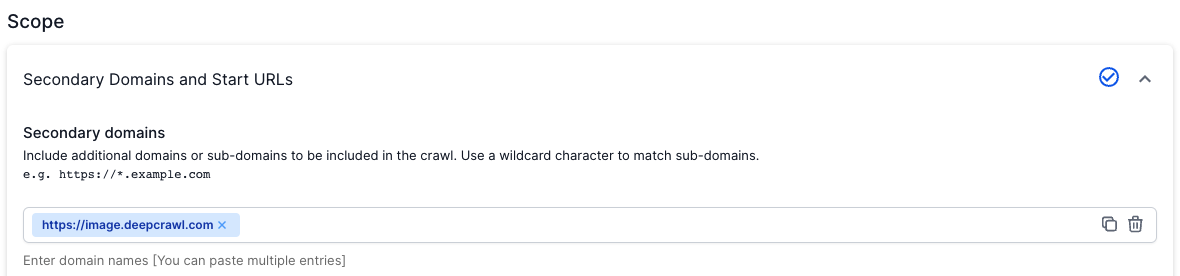

If you want Lumar to only crawl specific subdomain URLs (HTTP or HTTPS) then you will need to use the Secondary Domains feature in step 4 of the project settings.

Add secondary domains

To include specific subdomains in your crawl, add them to the ‘Secondary Domains’ section under Scope within Advanced Settings.

You can now enter as many domains or subdomains as you like. For example, your primary domain (https://www.example.com/) might include content which is located on the blog (https://blog.example.com) and documentation (https://docs.example.com) subdomains.

If these subdomains are linked to from the primary domain, then using the secondary domain feature allows our crawler to access the URLs on the subdomains. This makes sure that Lumar can crawl and process unique metrics (e.g. DeepRank) on the entire website, not just the primary domain.
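Conceptually, secondary domains act as an allow-list of extra hosts. The sketch below illustrates that idea with the example hosts above; `PRIMARY_HOST`, `SECONDARY_HOSTS` and `in_crawl_scope` are hypothetical names used for illustration, not Lumar settings or APIs.

```python
from urllib.parse import urlsplit

# Hypothetical configuration mirroring the example above.
PRIMARY_HOST = "www.example.com"
SECONDARY_HOSTS = {"blog.example.com", "docs.example.com"}


def in_crawl_scope(url: str) -> bool:
    """True if the URL is on the primary host or a listed secondary host."""
    host = (urlsplit(url).hostname or "").lower()
    return host == PRIMARY_HOST or host in SECONDARY_HOSTS
```

Under this model, a subdomain that is not listed (for example a hypothetical shop.example.com) stays out of scope, which is what keeps URL credits from being spent on it.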

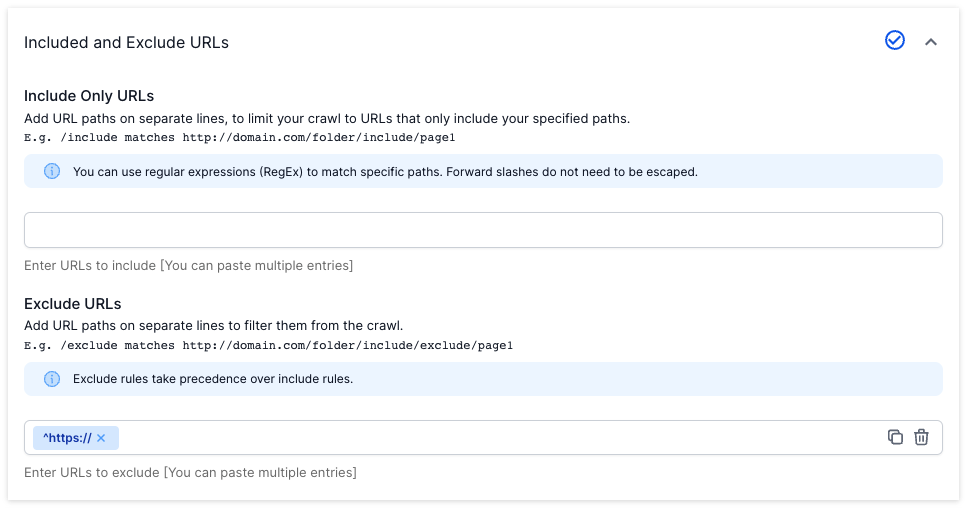

Restricting crawls on subdomains

To restrict the URLs on subdomains that Lumar crawls, you can also use the ‘Included Only URLs’ and ‘Excluded URLs’ filters in Advanced Settings (e.g. exclude parameter URLs on a marketing subdomain).

For further information on positive and negative URL restrictions in your crawl set up, check out our Restricting a Crawl guide.
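To make the idea of combining positive and negative restrictions concrete, here is a minimal sketch that uses regular expressions. The patterns, the marketing.example.com host, and the rule that exclusions win over inclusions are all assumptions made for this example; the exact matching syntax Lumar accepts is covered in the Restricting a Crawl guide.

```python
import re

# Hypothetical patterns: keep only the www and marketing hosts,
# then drop parameter URLs on the marketing subdomain.
INCLUDE_ONLY = [re.compile(r"^https?://(www|marketing)\.example\.com/")]
EXCLUDE = [re.compile(r"^https?://marketing\.example\.com/.*\?")]


def passes_filters(url: str) -> bool:
    """Apply include-only patterns first, then exclusions (assumed order)."""
    if INCLUDE_ONLY and not any(p.search(url) for p in INCLUDE_ONLY):
        return False
    return not any(p.search(url) for p in EXCLUDE)
```

For instance, https://marketing.example.com/offers passes, while https://marketing.example.com/offers?utm_source=x is filtered out.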

Frequently Asked Questions

Do I need to add a separate m. subdomain to the secondary domains feature?

If you want to see if your mobile and desktop websites are technically excellent, we recommend using the Mobile Site feature in the advanced project settings.

For more information on how to use this feature please read our guide on how to crawl a mobile website with Lumar.

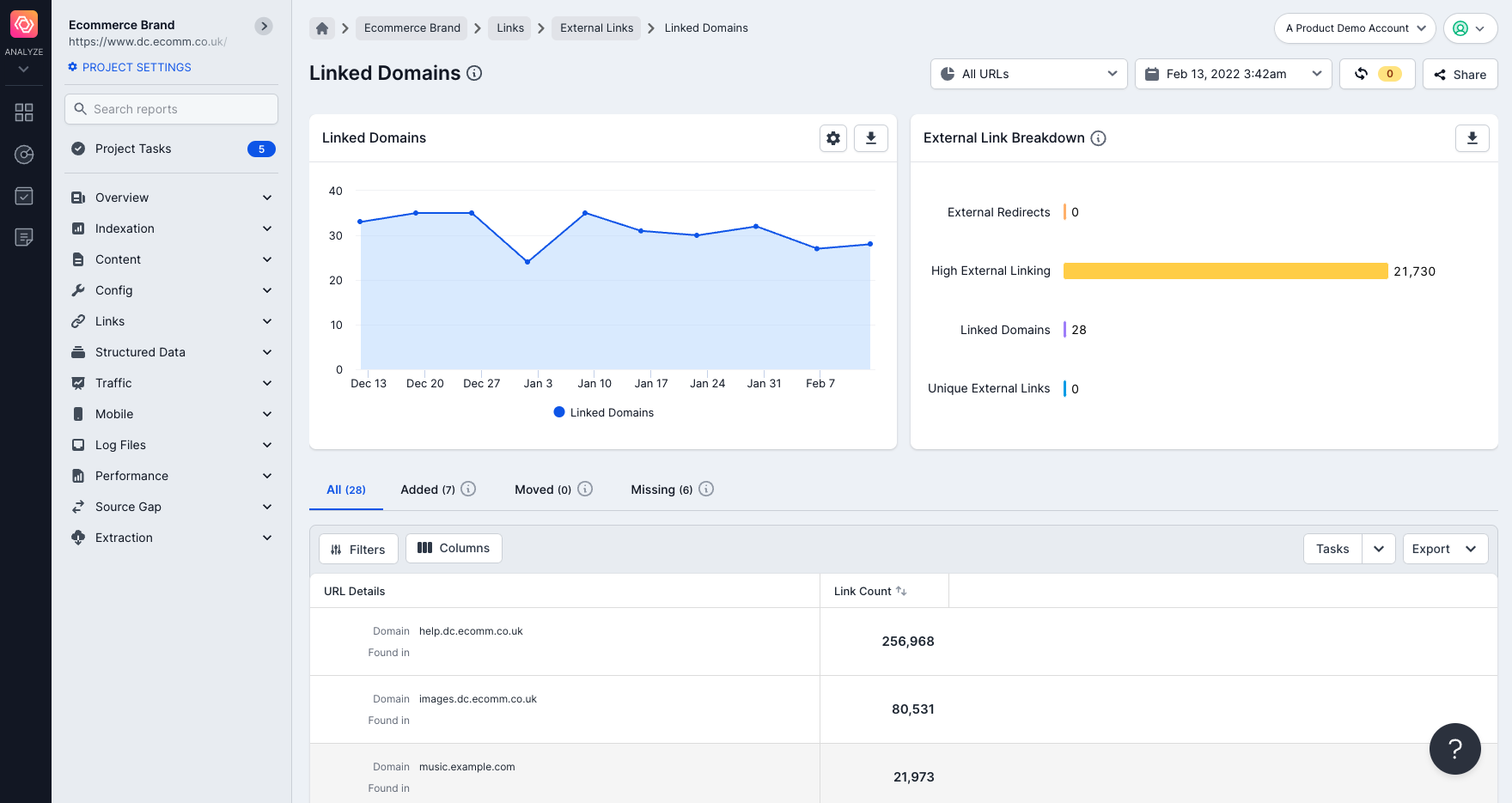

How do I find a list of subdomains which were discovered in a crawl?

A list of subdomains can be found in the “Linked Domains” report.

This report shows all the external domains and subdomains that crawled URLs link to.

Use this report to view which subdomains should be added to the crawl to get a better picture of the entire root domain.

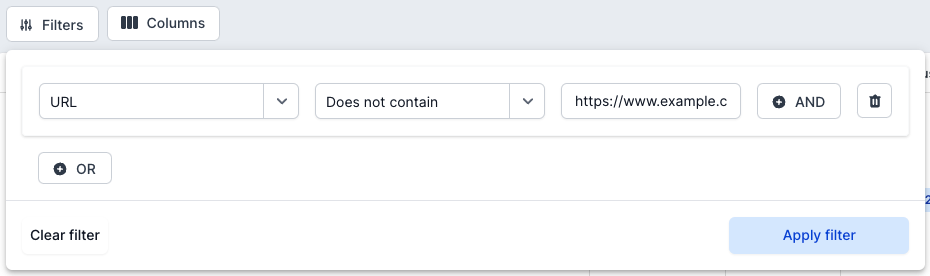

How do I find a list of crawled URLs from subdomains in Lumar?

The easiest way to see URLs from subdomains that were crawled is to use a filter.

Go to the ‘All Pages’ report and add a filter that excludes all URLs on the primary domain.

This can be done for both www and non-www primary domains. This filter will show you all subdomain pages found in the crawl.
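If you work with a list of crawled URLs outside the report, the same filter logic can be approximated as follows. This is a sketch only: `subdomain_urls` is a hypothetical helper, and it assumes you already have the URLs as plain strings.

```python
from urllib.parse import urlsplit


def subdomain_urls(urls: list[str], primary_host: str) -> list[str]:
    """Keep only URLs whose host differs from the primary domain's host."""
    primary = primary_host.lower()
    return [u for u in urls if (urlsplit(u).hostname or "").lower() != primary]
```

Pass `"www.example.com"` or `"example.com"` as `primary_host`, depending on whether your primary domain is the www or non-www version.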

How do I crawl images if they are on a subdomain?

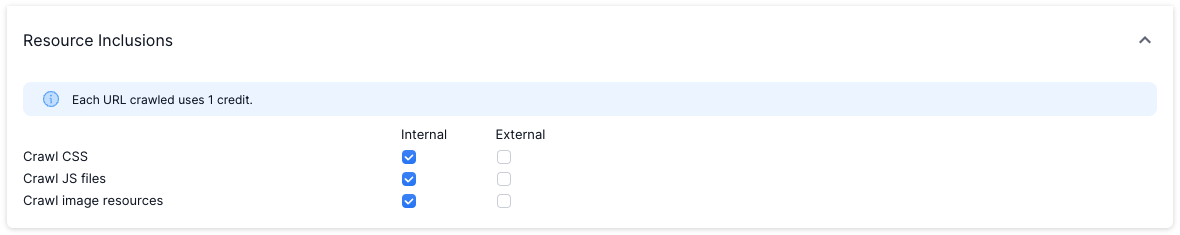

Enter the specific image subdomain into the Secondary Domain feature in step 4 or tick the “Crawl sub-domains” checkbox (this will allow all subdomains to be crawled under the primary domain).

Also, make sure that the image resources are ticked under the “Resource Inclusions” settings.

Will links found on the subdomains be counted as internal?

Yes. Links to any subdomain that is added to the Secondary Domains or crawled as part of the primary domain will count as internal links.

Are subdomains counted in the DeepRank score?

Yes. Any internal links pointing towards an internal HTML page will be counted in the DeepRank score of a page.

Will subdomains count towards the number of credits I can use?

Yes. When Lumar fetches any URL resource (HTML, CSS, JavaScript, etc.) it uses a URL credit.

Any questions about crawling subdomains?

If you have any further questions about crawling subdomains with Lumar, don’t hesitate to get in touch.