Lumar can be used to generate XML Sitemaps for pages that have been discovered and crawled.

Follow these instructions to generate XML Sitemaps in Lumar.

Selecting Which Pages to Include

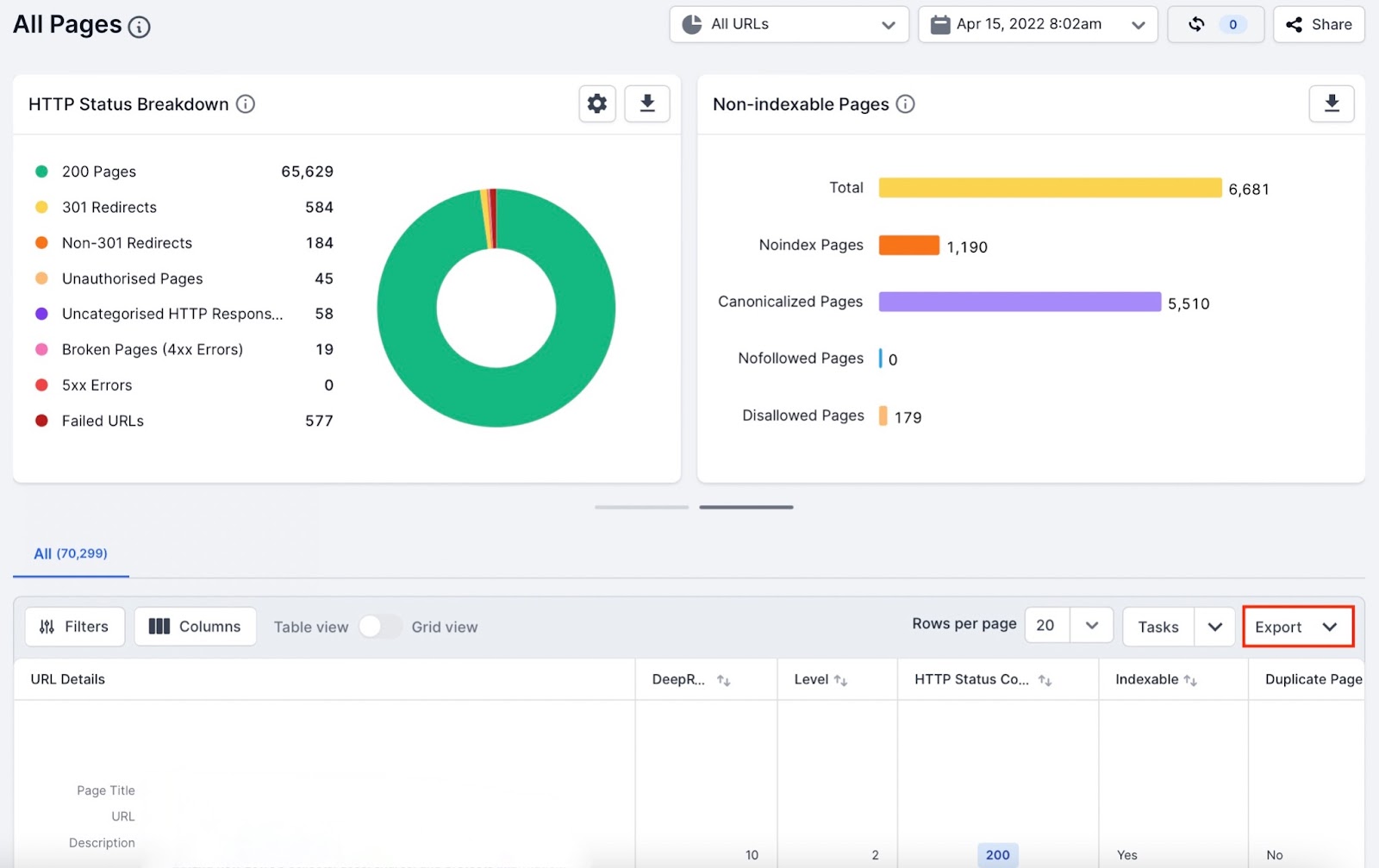

Navigate to the crawl project that the XML Sitemap will be generated from.

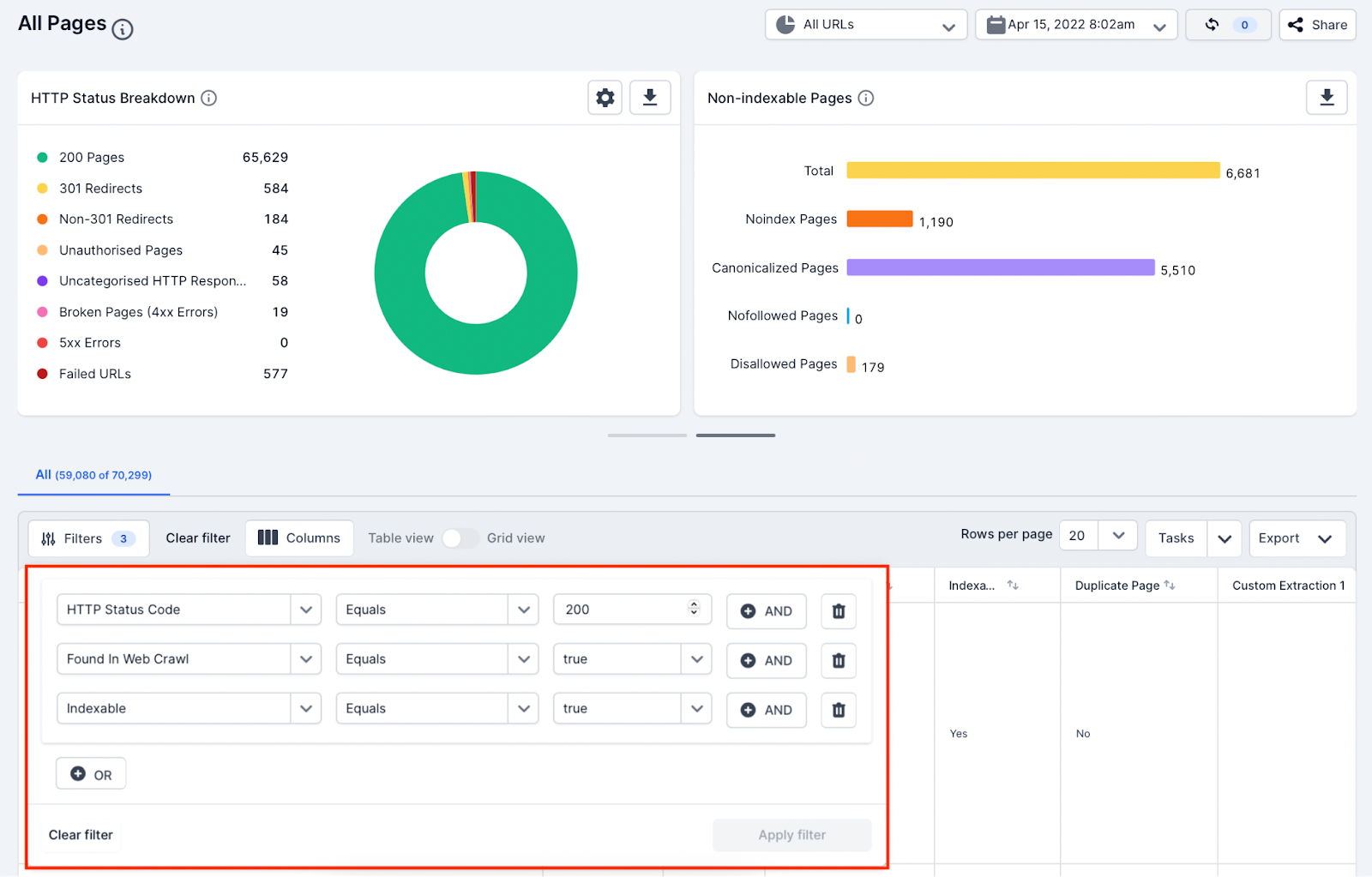

Go to the All Pages report and apply filters to get a list of pages you want to include in the XML Sitemap.

For example, the following filters would segment URLs that are live, indexable and found only in the web crawl. When filtering, keep the current best-practice guidelines in mind:

- Only include URLs which can be crawled and indexed by search engines.

- Only include the canonical URL of a page.

- Do not include broken or redirected URLs.

- Do not include canonicalized or noindex pages.

Careful page selection is especially important if the Sitemap is meant to improve how search engines crawl and index your website.
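The guidelines above can also be expressed as a simple filter over a list of crawled pages. The following is a minimal Python sketch, not Lumar's own filtering logic; the CSV column names (`url`, `http_status_code`, `indexable`, `canonical_url`, `noindex`) and the sample rows are hypothetical and may not match a real export.

```python
import csv
import io

# Hypothetical export: column names and rows are illustrative assumptions only.
SAMPLE_EXPORT = """url,http_status_code,indexable,canonical_url,noindex
https://www.example.com/kitchens/,200,true,https://www.example.com/kitchens/,false
https://www.example.com/kitchens/?sort=price,200,false,https://www.example.com/kitchens/,false
https://www.example.com/old-page,301,false,,false
https://www.example.com/garden/,200,false,https://www.example.com/garden/,true
"""


def sitemap_candidates(rows):
    """Yield URLs that meet the best-practice guidelines for Sitemap inclusion."""
    for row in rows:
        live = row["http_status_code"] == "200"  # excludes broken and redirected URLs
        indexable = row["indexable"] == "true"
        # Assumption: an empty canonical means the page is its own canonical.
        self_canonical = row["canonical_url"] in ("", row["url"])
        not_noindex = row["noindex"] == "false"
        if live and indexable and self_canonical and not_noindex:
            yield row["url"]


rows = csv.DictReader(io.StringIO(SAMPLE_EXPORT))
print(list(sitemap_candidates(rows)))
```

Only the first sample row passes every check; the others are excluded for being non-indexable, redirected or noindex.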

Breaking Down Large Sitemaps

A Sitemap file must contain no more than 50,000 URLs and must be no larger than 50MB (uncompressed). When selecting URLs to include in a Sitemap, make sure these limits are not exceeded, and break up large groups of pages into smaller Sitemaps if necessary.
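If you are working with a large URL list outside of Lumar, the limits can be applied programmatically. This minimal Python sketch, assuming the URLs are already in a list, splits them into groups of at most 50,000 and checks a finished file against the 50MB (52,428,800 bytes, uncompressed) limit; the function names are hypothetical.

```python
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50MB = 52,428,800 bytes, measured uncompressed


def chunk_urls(urls, max_urls=MAX_URLS):
    """Hypothetical helper: split URLs into consecutive groups of at most max_urls."""
    return [urls[i:i + max_urls] for i in range(0, len(urls), max_urls)]


def within_limits(xml_bytes, url_count):
    """Hypothetical helper: check one Sitemap file against both protocol limits."""
    return url_count <= MAX_URLS and len(xml_bytes) <= MAX_BYTES


urls = [f"https://www.example.com/product/{n}" for n in range(120_000)]
chunks = chunk_urls(urls)
print([len(chunk) for chunk in chunks])  # [50000, 50000, 20000]
```

Because very long URLs can push a 50,000-URL file past 50MB, it is worth running the byte check on each generated file as well as limiting the URL count.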

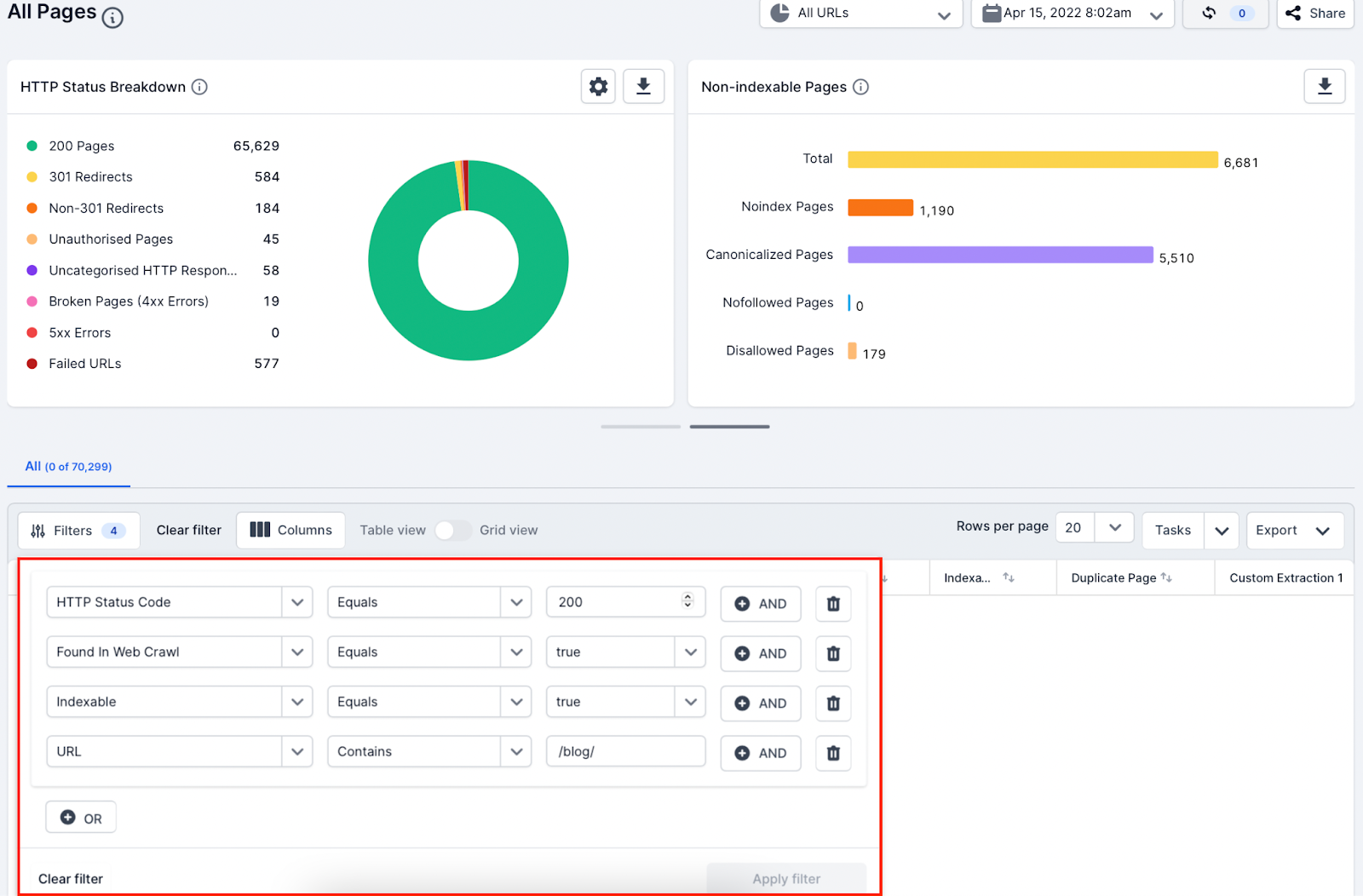

For example, rather than putting the whole website into a single XML Sitemap file, break it down into one Sitemap per website directory (URL path):

https://www.example.com/sitemap_bathrooms.xml

https://www.example.com/sitemap_kitchens.xml

https://www.example.com/sitemap_bedrooms.xml

https://www.example.com/sitemap_garden.xml

To do this, use the filter in Lumar to include only pages with a specific URL path, and generate a separate Sitemap for each path.
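Splitting by directory amounts to grouping URLs on the first segment of their path. The following Python sketch shows that grouping for a hypothetical list of URLs; the `sitemap_<directory>.xml` naming mirrors the example file names above, and using `root` for the homepage is an arbitrary, hypothetical choice.

```python
from collections import defaultdict
from urllib.parse import urlparse


def group_by_directory(urls):
    """Group URLs by their first path segment, e.g. /kitchens/... -> 'kitchens'."""
    groups = defaultdict(list)
    for url in urls:
        segments = [s for s in urlparse(url).path.split("/") if s]
        # Hypothetical label for URLs with no directory, such as the homepage.
        directory = segments[0] if segments else "root"
        groups[directory].append(url)
    return dict(groups)


urls = [
    "https://www.example.com/kitchens/island-units",
    "https://www.example.com/kitchens/worktops",
    "https://www.example.com/bathrooms/showers",
    "https://www.example.com/",
]
for directory, members in group_by_directory(urls).items():
    print(f"sitemap_{directory}.xml: {len(members)} URL(s)")
```

Each group can then be checked against the 50,000-URL and 50MB limits before it becomes its own Sitemap file.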

Breaking down Sitemaps and submitting them to Google Search Console is a good way to better understand indexability issues across the different sections of a large website.

Generate the XML Sitemap

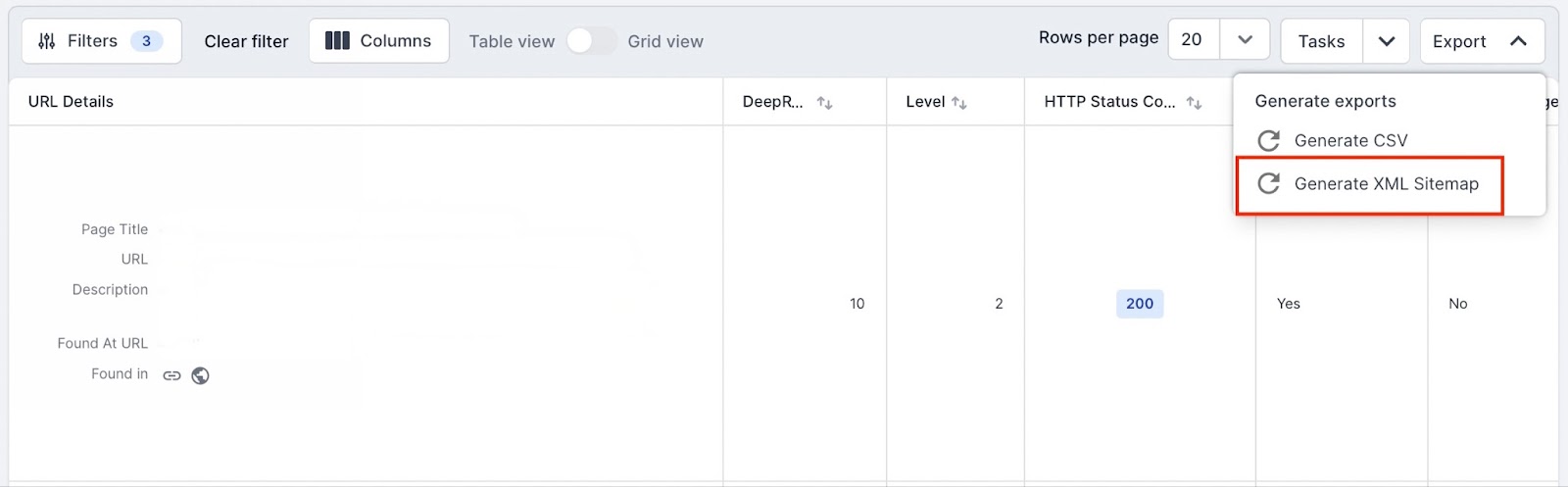

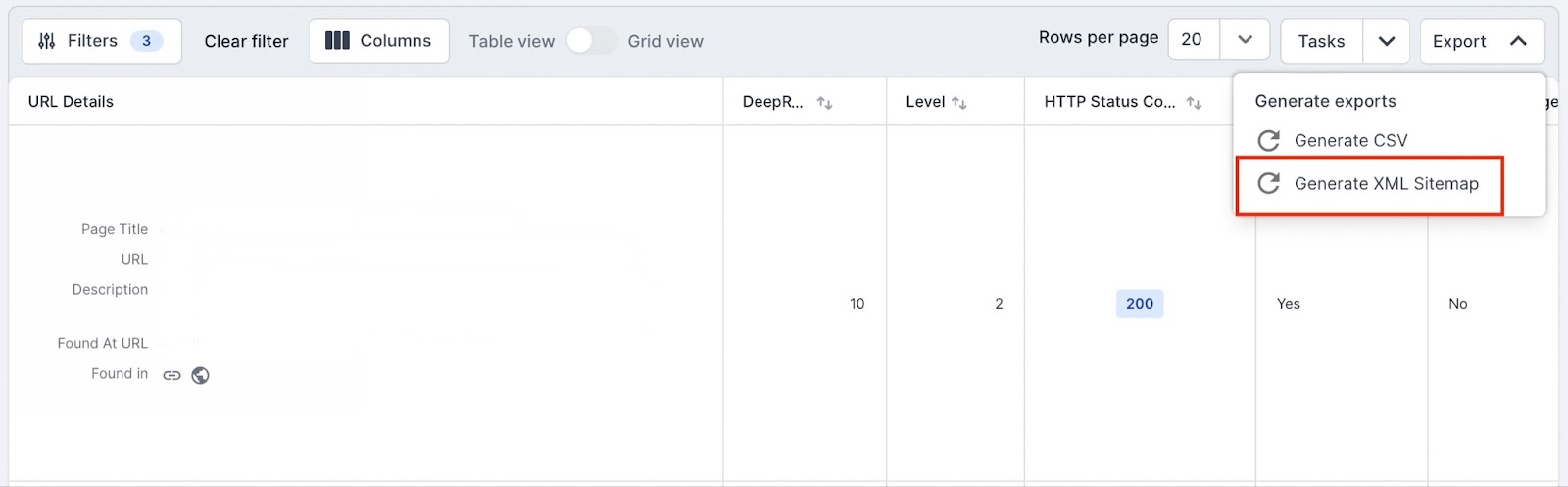

Once you have used the filters to decide which URLs to include in the XML Sitemap, click the download icon on the right of the report.

The export drop-down menu shows two options. Click “Generate XML Sitemap”.

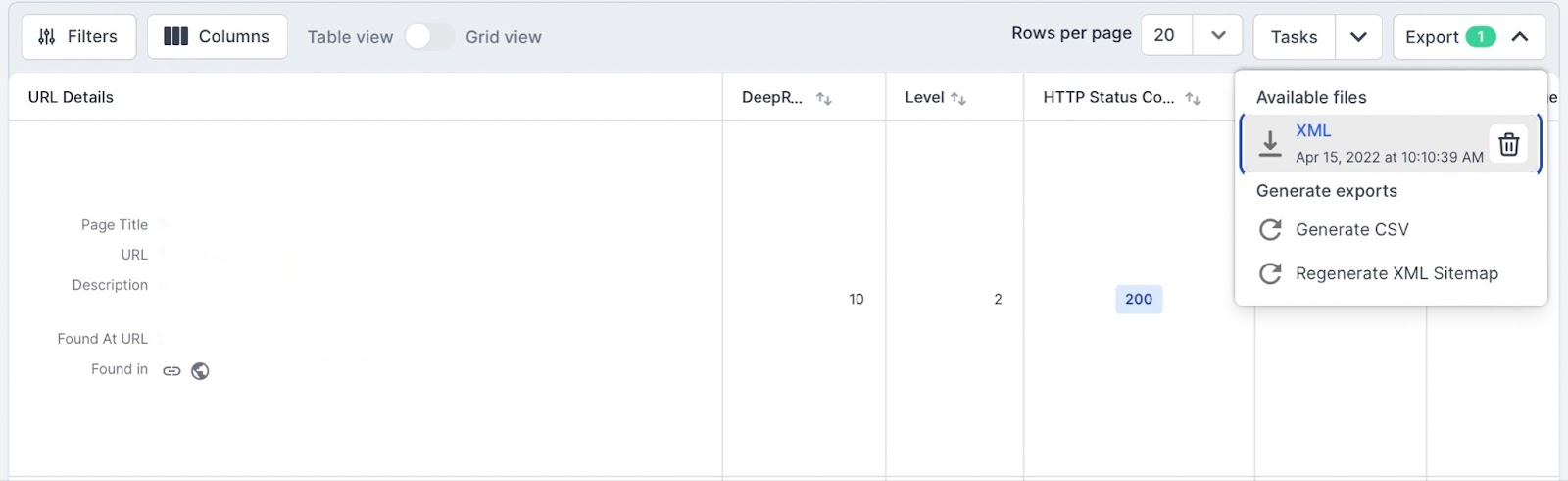

After you click it, the XML file appears under “Available Files” while it is being generated. When it has finished, the file is ready to download.

Generated Lumar Sitemap Format

Lumar generates XML Sitemaps that follow the Sitemap protocol standard. It populates only the XML tags the protocol requires:

- urlset

- url

- loc

At the moment, Lumar cannot populate the lastmod, changefreq or priority XML tags (these are optional in the protocol). It also cannot generate news, image or video Sitemaps; for now, Lumar can only generate Sitemaps for HTML web pages.
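To illustrate this format, the sketch below builds a Sitemap containing only the `urlset`, `url` and `loc` tags, using Python's standard `xml.etree.ElementTree` module (Python 3.8+ for `xml_declaration`). It assumes the URL list has already been selected and is an example of the protocol's structure, not a description of how Lumar produces its files; `build_sitemap` is a hypothetical name.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Hypothetical helper: return Sitemap XML bytes using only urlset, url and loc."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as & as required by the protocol.
        ET.SubElement(url_el, "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


print(build_sitemap(["https://www.example.com/kitchens/?a=1&b=2"]).decode("utf-8"))
```

Optional tags such as `lastmod` would be further `SubElement` calls inside each `url`, which is exactly what the Lumar output currently leaves out.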

Summary

Lumar can generate XML Sitemaps from the URLs in any of our 250+ reports. For a generated Sitemap file to be valid, it must stay within the Sitemap protocol's size limits: no more than 50,000 URLs and no larger than 50MB per file.
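Before submitting a generated Sitemap, you may want to confirm it stays within these limits. This minimal Python sketch parses a Sitemap, checks that the root element is `urlset` in the Sitemap namespace, and counts its `loc` entries and bytes. The inline sample stands in for a downloaded file, and both `check_sitemap` and the file name in the comment are hypothetical.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
MAX_URLS = 50_000
MAX_BYTES = 52_428_800  # 50MB, uncompressed


def check_sitemap(xml_bytes):
    """Hypothetical helper: return a list of problems found (empty if none)."""
    problems = []
    if len(xml_bytes) > MAX_BYTES:
        problems.append(f"file is {len(xml_bytes)} bytes; limit is {MAX_BYTES}")
    root = ET.fromstring(xml_bytes)
    if root.tag != f"{{{NS['sm']}}}urlset":
        problems.append("root element is not <urlset> in the Sitemap namespace")
    locs = root.findall("sm:url/sm:loc", NS)
    if len(locs) > MAX_URLS:
        problems.append(f"{len(locs)} URLs; limit is {MAX_URLS}")
    return problems


sample = (b'<?xml version="1.0" encoding="UTF-8"?>'
          b'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
          b'<url><loc>https://www.example.com/garden/</loc></url>'
          b'</urlset>')
# For a downloaded file (hypothetical name): check_sitemap(open("sitemap.xml", "rb").read())
print(check_sitemap(sample))
```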