The importance of internationalization

In this guide to website internationalization, you’ll learn how to optimize multilingual and multiregional websites so that they are indexed and served to the correct audiences in the correct languages. When a website goes global and has many language variations, international SEO is crucial for making sure that the right language version of its content is shown in the relevant SERPs. For example, a person using Google in Spain is likely to use google.es, whereas a person in the UK is more likely to use google.co.uk.

Another consideration for international SEO is that sites serving similar content across multiple languages and locations can cause duplication issues. If this duplication is not handled correctly, it can lead to indexing problems: one version of a page may be indexed for a single market, while the content written for another market is treated as a duplicate and therefore ignored. For example, if you have a page targeting the UK that is a duplicate of a page targeting the US, then only the US page may be indexed. If internationalization is handled correctly, though, and the US version ranks well for a user in the UK, the US result is simply swapped out in the SERP for the UK equivalent.

You’ll also see instances where multiple countries fall into a single language market, such as a business with German content targeting users in Switzerland and Austria as well as Germany. Here, you want the correct version of the website to show in each country’s search results so that users see the correct currency, billing, and delivery information.

International site architecture

There are four main URL strategies for hosting different international versions of a website. Each has its own advantages and disadvantages, which we’ll go on to explain in this section of the guide.

The four main international web structure options are:

- Subdomains

- Subdirectories

- Separate domains or ccTLDs

- Parameter-based URL separation
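
To make the four options concrete, here is a minimal Python sketch that guesses which structure a URL uses. The helper name `classify_structure`, the small set of locale codes, and the `location` parameter name are assumptions for illustration, based on the example URLs used in this guide; a real site would need its own list of markets.

```python
from urllib.parse import urlparse, parse_qs

# Assumed set of market codes, for illustration only.
KNOWN_LOCALES = {"fr", "de", "es", "uk", "us"}


def classify_structure(url: str, param: str = "location") -> str:
    """Guess which of the four URL strategies a URL appears to use."""
    # Allow scheme-less input such as "fr.example.com".
    parsed = urlparse(url if "//" in url else "//" + url)
    host_labels = (parsed.hostname or "").split(".")
    path_parts = [part for part in parsed.path.split("/") if part]

    if param in parse_qs(parsed.query):
        return "parameter"
    if path_parts and path_parts[0].lower() in KNOWN_LOCALES:
        return "subdirectory"
    if len(host_labels) > 2 and host_labels[0] in KNOWN_LOCALES:
        return "subdomain"
    if host_labels[-1] in KNOWN_LOCALES:
        return "ccTLD"
    return "unknown"


for example in ("fr.example.com", "example.com/fr/", "example.fr",
                "example.com/?location=fr"):
    print(example, "->", classify_structure(example))
```

A check like this can be handy when auditing a list of competitor URLs to see which structure each one has chosen.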

Subdomains

A subdomain sits on a root domain, and is a part of this larger domain. Subdomains can be used to separate out different content versions, as Google sees them as separate entities.

This is an example of a subdomain:

fr.example.com

The pros of using subdomains

Here are the main benefits of using a subdomain:

- Can be configured using Google Search Console, which allows you to easily control and manage geotargeting for Google.

- Can be configured using Bing Webmaster Tools, which means that you can set and manage geotargeting for Bing.

- It is more convenient to set up geotargeting for specific subdomains as the subdomain and all of its subdirectories fall into the same geotarget.

- Allows the server to be physically hosted in multiple locations. Server location is a light signal used to determine the geotarget of a site, although it is not as important now as it once was.

The cons of using subdomains

Here are the main issues you’ll face if you use a subdomain for structuring your international content:

- Users may not recognize the location targeted from the subdomain alone. For example, they may wonder whether a site ending in .com is US-based even though it targets German speakers.

- Not all link equity necessarily flows from the subdomain to the root domain.

Subdirectories

A subdirectory is a folder that sits under a root domain. These aren’t seen as separate entities and inherit signals from the rest of the main site.

This is an example of a subdirectory:

example.com/fr/

The pros of using subdirectories

These are the benefits of using subdirectories for structuring your international content:

- Easy to implement as these are simply additional folders on the main site.

- Can be configured using Google Search Console, which allows you to easily control and manage geotargeting for subdirectories separately in Google.

- Can be configured using Bing Webmaster Tools, which means that you can set and manage geotargeting for subdirectories separately in Bing.

- Lower maintenance as these run under a single host.

- Link equity typically has a greater effect when all language versions are located on the same domain.

The cons of using subdirectories

Here are the negative impacts of using subdirectories for international web structure:

- Users may not notice the geotargeting difference as it can become visually buried within the URL. This is likely to be the case on smartphones, as mobile browsers do not display the full URL. Users may also not notice the targeting in the URL if they land on the wrong geo-directory.

Separate domains or ccTLDs

A country code top-level domain (ccTLD) is the extension found at the end of a domain name, and it shows search engines and users which country a website is registered to. ccTLDs are seen as separate domains, and they target countries and regions but not languages.

This is an example of a ccTLD:

example.fr

The pros of using ccTLDs

Here are the main benefits of using a separate domain or ccTLD:

- ccTLDs are clearly apparent to users of the target market. This is important because in some markets a local domain is essential for gaining user trust.

- Server location becomes irrelevant as the ccTLD makes the targeted location clear to search engines.

The cons of using ccTLDs

Here are the negative aspects of using ccTLDs or separate domains to structure your international content:

- Geotargeting within Google Search Console is not possible for ccTLDs, as this is automatically assigned in most cases. However, some ccTLDs are considered international and can be targeted, such as .cc or .co.

- ccTLDs are more expensive to run and require more infrastructure as you need to host them all separately.

- Consistent domains may not be available in all markets for each ccTLD you want.

- You still need to maintain a URL strategy for language-specific content within a country, as geotargeting can’t be applied via Google Search Console, for example.

- There are often strict ccTLD requirements. Several countries, for example, require you to be a locally registered company in order to buy their ccTLD.

- Some TLDs are not recognized as country-specific, such as .eu, .com, .asia and .org.

Parameter-based URL separation

URL parameters can be used to separate out language variations, and this can be done with a string at the end of a URL. These aren’t seen as separate entities.

This is an example of a language parameter:

example.com/?location=fr

The pros of using parameters

Here are the benefits of using parameters to separate out different language versions of content:

- Using parameters helps to consolidate link equity because you aren’t separating out language versions into separate entities with their own link profiles.

The cons of using parameters

These are the negative aspects of using language parameters:

- Geotargeting within Google Search Console and Bing Webmaster Tools is not possible.

- Parameters are prone to breaking and are often accidentally removed during redirection and paid promotion.

- Users may not notice the geotargeting difference as the parameter can become buried in the URL.
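
One way to reduce the risk of a parameter being dropped during redirection is to copy it onto the redirect target explicitly. The Python sketch below illustrates the idea under stated assumptions: the function name `carry_over_param` and the `location` parameter are hypothetical, taken from the example URL above rather than from any particular platform.

```python
from urllib.parse import urlparse, urlunparse, parse_qsl, urlencode


def carry_over_param(source_url: str, target_url: str,
                     param: str = "location") -> str:
    """Copy `param` from source_url onto target_url unless the target sets it."""
    source_params = dict(parse_qsl(urlparse(source_url).query))
    if param not in source_params:
        # Nothing to preserve, so the redirect target is used unchanged.
        return target_url

    target = urlparse(target_url)
    target_params = parse_qsl(target.query)
    if param not in dict(target_params):
        target_params.append((param, source_params[param]))
    return urlunparse(target._replace(query=urlencode(target_params)))


print(carry_over_param("https://example.com/old?location=fr",
                       "https://example.com/new?utm_source=ad"))
```

The same approach could be applied wherever redirect rules or campaign URLs are generated, so that the language version survives the hop.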

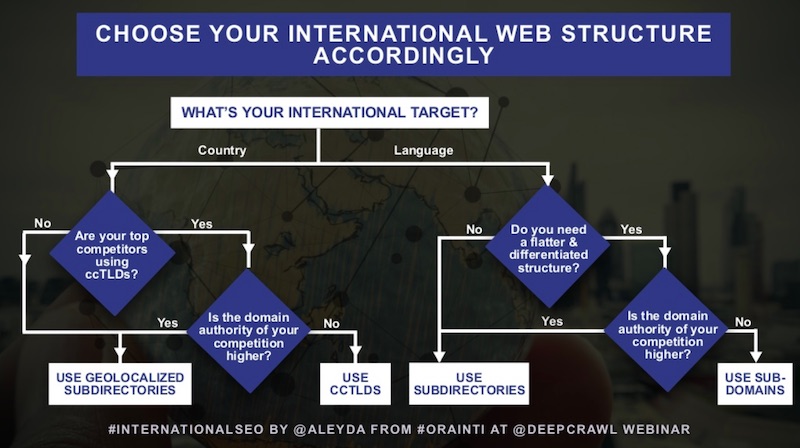

There are a few different considerations to make when deciding which international web structure would best suit your business, such as domain authority and the types of structures your competitors are using. International SEO expert, Aleyda Solis, has created the flowchart below to help make your decision easier. Make sure you take a look at the internationalisation webinar we held with Aleyda if you want to learn more.

How geographic targeting works in Google Search Console & Bing Webmaster Tools

In Google Search Console, geotargeting can be set up for country-specific subdomains on gTLDs (generic top-level domains), and for subdirectories on gTLDs. URL parameters cannot be geotargeted.

Bing Webmaster Tools allows you to geotarget in the same way as Google at a domain, subdomain and directory level, but with the addition of page-level targeting. This allows you to set a different preferred language version for a single landing page which is set up to target that audience. This is Bing’s example of how page-level targeting can work:

A site in the Netherlands — hosted on the .nl ccTLD — can designate a single page to be targeted to a German audience, say https://www.example.nl/willkommen-deutsche-gaeste.html.

The problems with geographic redirection

It is possible for sites to use a visitor’s IP address to determine their location and redirect them to the subdirectory or subdomain that best suits them. However, this is strongly discouraged because IP-based redirects apply to crawlers too, which are sent to the version matching the location they crawl from. Googlebot mainly crawls from the US, so if you set up an automatic redirect based on IP, Google may only be able to find and index your US content. If it is redirected away from content for other markets, it won’t even know your other language variations exist.

This is why geo-redirection is poor practice and should generally not be implemented. If Googlebot visits from a US IP address, you should treat it the same as any other US visitor and make the other language options available for it to explore.

Google doesn’t only crawl from the US, and is now crawling more sites from other country IP addresses. However, other search engines still crawl primarily from the countries in which they are based. Bing crawls from the US, Baidu crawls from China and Yandex crawls from Russia, so this is yet another reason to be mindful of geographic redirection. Don’t rely on cookies to tell if search engines have been redirected, as they are not able to save cookies.

Geographic redirection also causes problems for users because IP lookups and matching can be poor in some instances and can leave them stranded on incorrect sites. Further problems can arise where sites do not let users ‘leave’ the language section of the site that has been chosen for them. Any geo-redirection should be able to be overridden by the user.

A better solution for guiding users to different language versions is therefore to use soft prompts, such as a navigation element, JavaScript banner or in-page notification suggesting that users can go to the local version of the website instead. A pop-up with clear navigation for selecting other language versions, preselected based on the perceived location of the user or search engine, has a similar but gentler effect than geographic redirection and still allows users and crawlers to access other parts of the site.
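
As a rough illustration of a soft prompt, the Python sketch below decides whether to show a suggestion banner without ever redirecting. Everything named here is an assumption for the example: the `COUNTRY_TO_VERSION` mapping, the `suggest_version` helper, and the idea that the visitor’s country code comes from some IP lookup that is not shown.

```python
from typing import Optional

# Hypothetical mapping from a visitor's country code to a site version.
COUNTRY_TO_VERSION = {"FR": "/fr/", "DE": "/de/", "GB": "/uk/", "US": "/us/"}


def suggest_version(visitor_country: str, requested_path: str) -> Optional[dict]:
    """Return data for a suggestion banner, or None if no prompt is needed.

    The requested page is always served as-is; nothing here redirects,
    so users and crawlers can still reach every language version.
    """
    suggested = COUNTRY_TO_VERSION.get(visitor_country.upper())
    if suggested is None or requested_path.startswith(suggested):
        return None
    return {
        "suggested_path": suggested,
        # Listing every version lets the user override the guess.
        "all_versions": sorted(set(COUNTRY_TO_VERSION.values())),
    }


print(suggest_version("FR", "/us/pricing"))
print(suggest_version("US", "/us/pricing"))
```

Because the function only returns data for a banner, a wrong IP match costs the user one dismissed prompt rather than leaving them stranded on the wrong site.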

Locale-aware crawling

Some websites have pages where the content adapts based on the perceived location or language of the visitor, which are called locale-adaptive pages. This method is used when having separate URLs for different language variations is not possible for a website.

Google will automatically detect locale-adaptive pages and configure its crawling accordingly. These are the ways in which Google adjusts its crawling for these pages:

- Geo-distributed crawling: Googlebot starts to use IP addresses that appear to come from outside the USA, in addition to the US-based IP addresses it uses by default.

- Language-dependent crawling: Googlebot starts to crawl with an Accept-Language HTTP header in the request. This is for sites that adapt their content based on the Accept-Language header sent with the request.

Bear in mind that other search engines, social networks and crawlers may not behave in this way, so solely relying on this is poor practice.
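
To show what language-dependent content selection involves, here is a minimal Python sketch of how a locale-adaptive page might read the Accept-Language header. It is a simplified illustration, not a full implementation of HTTP content negotiation, and the function names and the list of supported languages are assumptions.

```python
from typing import List


def parse_accept_language(header: str) -> List[str]:
    """Return language tags from an Accept-Language header, best match first."""
    weighted = []
    for position, item in enumerate(header.split(",")):
        item = item.strip()
        if not item:
            continue
        tag, _, params = item.partition(";")
        quality = 1.0  # A tag without a q-value counts as fully preferred.
        params = params.strip()
        if params.startswith("q="):
            try:
                quality = float(params[2:])
            except ValueError:
                quality = 0.0
        if quality > 0:
            # Sort by descending quality, then by original order.
            weighted.append((-quality, position, tag.strip()))
    return [tag for _, _, tag in sorted(weighted)]


def choose_language(header: str, supported: List[str], default: str = "en") -> str:
    """Pick the first supported language the visitor prefers, else the default."""
    for tag in parse_accept_language(header):
        primary = tag.split("-")[0].lower()
        for candidate in supported:
            if candidate.lower() in (tag.lower(), primary):
                return candidate
    return default


print(choose_language("de-CH, de;q=0.9, en;q=0.8", ["en", "de", "fr"]))
```

A crawler that sends no Accept-Language header at all would always receive the default here, which is one reason relying on this method alone is risky.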

Since 2015, Google has had some support for crawling these locale-adaptive pages. However, it is still strongly recommended that different URLs or TLDs are used for content tailored to different countries or languages. Here’s a quote from Google on the subject:

These new configurations do not alter our recommendation to use separate URLs with rel=alternate hreflang annotations for each locale. We continue to support and recommend using separate URLs as they are still the best way for users to interact and share your content, and also to maximize indexing and better ranking of all variants of your content.
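
As an illustration of the rel=alternate hreflang annotations mentioned in the quote, the Python sketch below generates the link tags for one page that exists in three German-language markets. The URLs, the `ALTERNATES` mapping, and the `hreflang_links` helper are hypothetical; the tags themselves follow the standard `<link rel="alternate" hreflang="...">` form, with `x-default` as an optional fallback.

```python
from html import escape

# Hypothetical URLs for one page in three German-language markets.
ALTERNATES = {
    "de-DE": "https://example.com/de/",
    "de-AT": "https://example.com/at/",
    "de-CH": "https://example.com/ch/",
}


def hreflang_links(alternates: dict, default_url: str = "") -> list:
    """Build one <link> tag per locale, plus an optional x-default fallback."""
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in alternates.items()
    ]
    if default_url:
        links.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}" />'
        )
    return links


for tag in hreflang_links(ALTERNATES, default_url="https://example.com/"):
    print(tag)
```

Each version of the page would normally carry the same full set of tags, including one pointing to itself, so that the annotations are reciprocal.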

Learn more about international SEO with our comprehensive guide

If you want to learn more about internationalization and achieving SEO success globally, make sure you read our comprehensive guide on the topic. Featuring advice from industry-leading experts on international site structure, hreflang, localization and much more, this is not one to miss.
