It’s possible to configure exactly which domains and subdomains will be included in your crawl.

This is useful if you wish to filter in/out known subdomains or if you only want to crawl a very specific area of your website.

Any URLs that fall outside the domains defined for the crawl are treated as external. They will not be crawled and will therefore not appear in your reports.

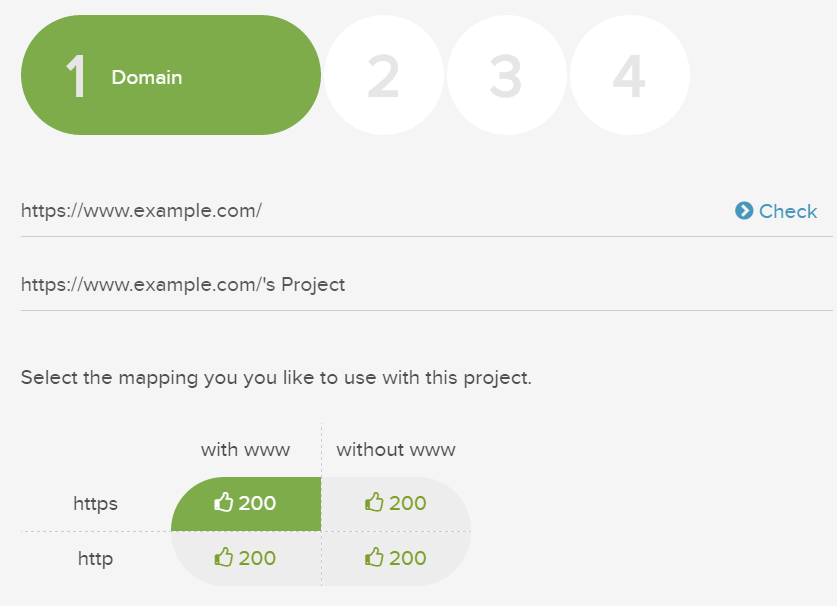

By default, the domain listed in step 1 of the crawl setup defines the domain scope and crawl start point.

Make sure you choose the correct protocol (HTTP or HTTPS) for this domain.

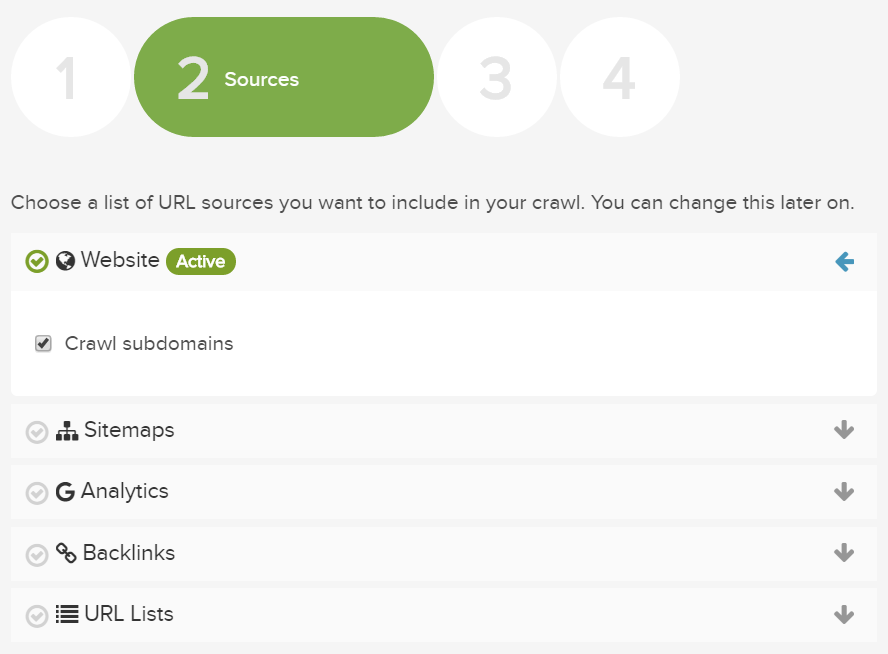

If you select the ‘Crawl subdomains’ option for Website crawl in step 2, Lumar will automatically include any URLs discovered during the crawl that sit on a subdomain of your primary domain.

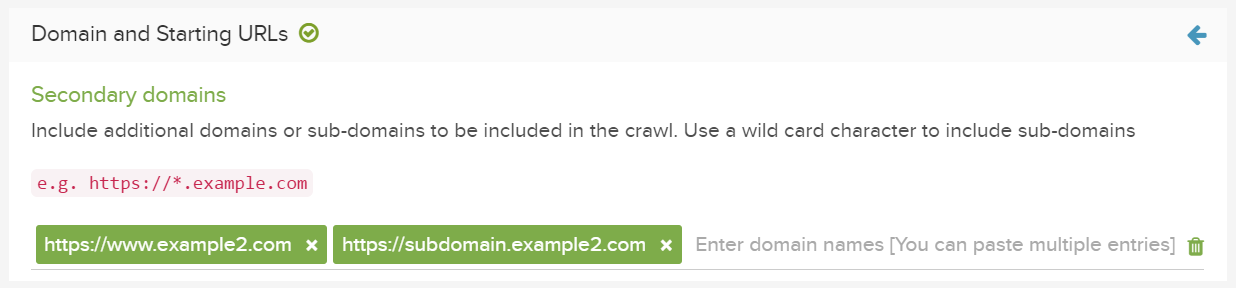
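
If you want to predict which discovered URLs would count as subdomain URLs, the idea can be sketched in a few lines of Python. This is only an illustration and not Lumar's actual implementation: the function name `is_subdomain_of` and the example domains are hypothetical, and the matching rule (a host that ends with a dot followed by the primary domain) is an assumption.

```python
from urllib.parse import urlsplit


def is_subdomain_of(url: str, primary_domain: str) -> bool:
    """Return True if the URL's host is a strict subdomain of primary_domain.

    Hypothetical helper for illustration only; Lumar's real matching
    rules may differ.
    """
    host = (urlsplit(url).hostname or "").lower()
    primary = primary_domain.lower().rstrip(".")
    # Match on a dot boundary so that "notexample.com" is not mistaken
    # for a subdomain of "example.com".
    return host.endswith("." + primary)


print(is_subdomain_of("https://blog.example.com/post", "example.com"))
print(is_subdomain_of("https://notexample.com/", "example.com"))
```

Matching on the dot boundary matters: a plain "ends with" check would wrongly treat an unrelated domain such as `notexample.com` as part of `example.com`.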

To include additional domains or subdomains in your crawl, add them to the ‘Secondary Domains’ section under Scope in Advanced Settings.

You can enter as many domains or subdomains as you like.

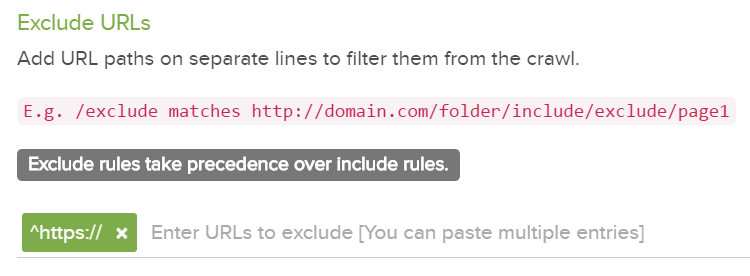
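
To sanity-check a list of secondary domains before you start a crawl, you could model the scope as a simple set of allowed hosts. The sketch below is an assumption about how such a check might look, not a description of how Lumar works internally: `in_scope`, the exact-host comparison, and the example domains are all hypothetical, and the protocol is deliberately ignored.

```python
from urllib.parse import urlsplit


def in_scope(url: str, primary_domain: str, secondary_domains: list[str]) -> bool:
    """Return True if the URL's host is the primary or a secondary domain.

    Hypothetical helper: compares hosts exactly and ignores the protocol.
    """
    host = (urlsplit(url).hostname or "").lower()
    allowed = {primary_domain.lower()} | {d.lower() for d in secondary_domains}
    return host in allowed


secondary = ["shop.example.com", "example.org"]
for url in [
    "https://www.example.com/about",
    "https://shop.example.com/cart",
    "https://cdn.example.net/logo.png",
]:
    status = "in scope" if in_scope(url, "www.example.com", secondary) else "external"
    print(url, "->", status)
```

Running a sample of your own URLs through a check like this can highlight domains you may have forgotten to list.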

You can also use the ‘Included Only URLs’ and ‘Excluded URLs’ filters in Advanced Settings to further restrict any domains or subdomains, e.g. to filter out all HTTPS versions of a website.
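
Before saving a filter, it can help to test the pattern against a few sample URLs. The following sketch assumes patterns are regular expressions matched anywhere in the URL and that an exclusion always wins over an inclusion. Both are assumptions made for illustration, and `passes_filters` is a hypothetical helper rather than part of Lumar.

```python
import re


def passes_filters(url: str, included_only=(), excluded=()) -> bool:
    """Return True if the URL would survive the given filter patterns.

    Assumptions: patterns are regular expressions, an empty
    included_only list allows everything, and exclusions take priority.
    """
    if included_only and not any(re.search(p, url) for p in included_only):
        return False
    return not any(re.search(p, url) for p in excluded)


# Example: filter out every HTTPS URL.
exclude_https = [r"^https://"]
print(passes_filters("https://www.example.com/", excluded=exclude_https))
print(passes_filters("http://www.example.com/", excluded=exclude_https))
```

Anchoring the pattern with `^` keeps it from matching URLs that merely contain `https://` later on, for example in a query string.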

For further information on positive and negative URL restrictions in your crawl setup, check out the Restricting a Crawl guide.

If your setup is getting complicated or you need any help with these features, feel free to contact us.