Analyze, monitor, & protect your website’s technical health

Ready to drive more traffic, conversions, and revenue? Lumar is the platform of choice for enterprise organizations around the globe to identify issues preventing their sites from reaching their full potential, including technical SEO, web accessibility, site speed, and even bespoke metrics to capture practically anything from the HTML of a web page.

See why leading brands choose Lumar to manage their websites’ technical SEO, site speed, digital accessibility, and revenue-driving technical health. Get a demo today.

Empower cross-functional website teams with actionable, centralized insights

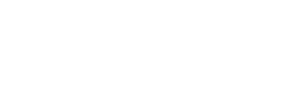

Build connected SEO & website health workflows

Your website has numerous stakeholders: SEOs, developers, accessibility teams, UX designers, digital marketers, and the C-suite. Keep them all aligned with Lumar, a single source of truth for everyone working on your website, with extensive metrics for technical SEO, website accessibility, and more.

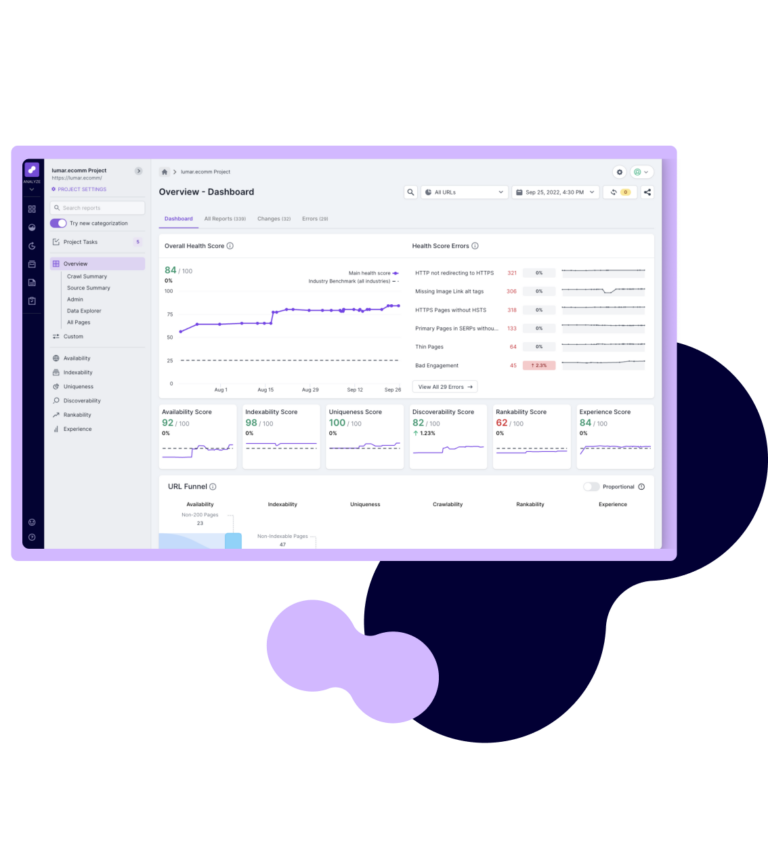

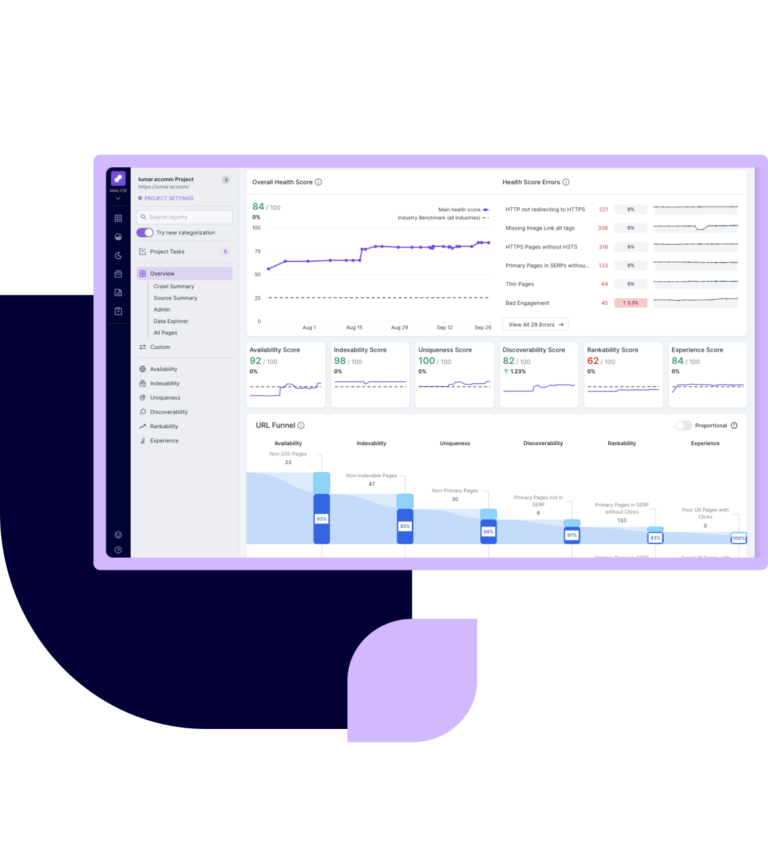

- Audit your domains and dive into analytics to fully understand the technical health issues that are impeding your website’s success.

- Quickly prioritize website issues with dashboards, visualizations and health scores.

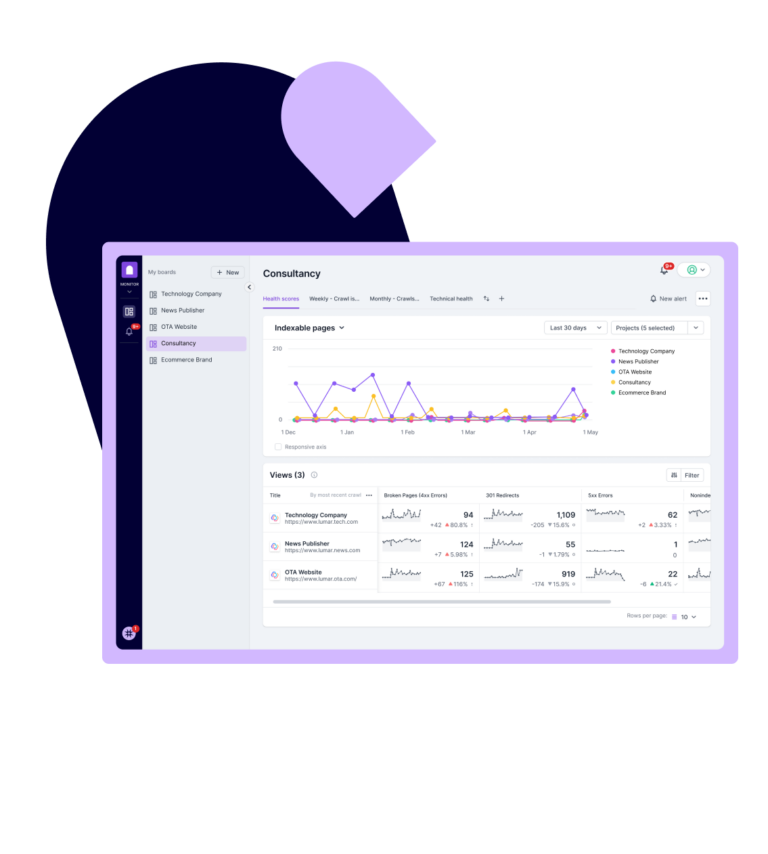

- Monitor all your website domains and key site sections in one place with customizable dashboards and alerts.

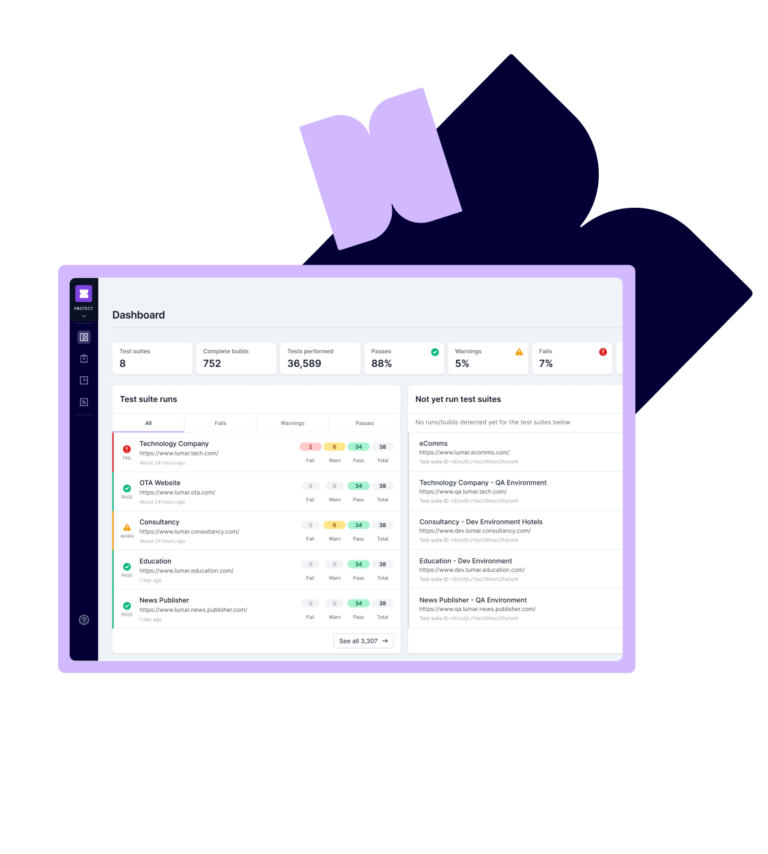

- Protect your site from new issues with template testing or fully automated QA testing.

- Track and communicate progress to stakeholders with an easy-to-understand view of website health.

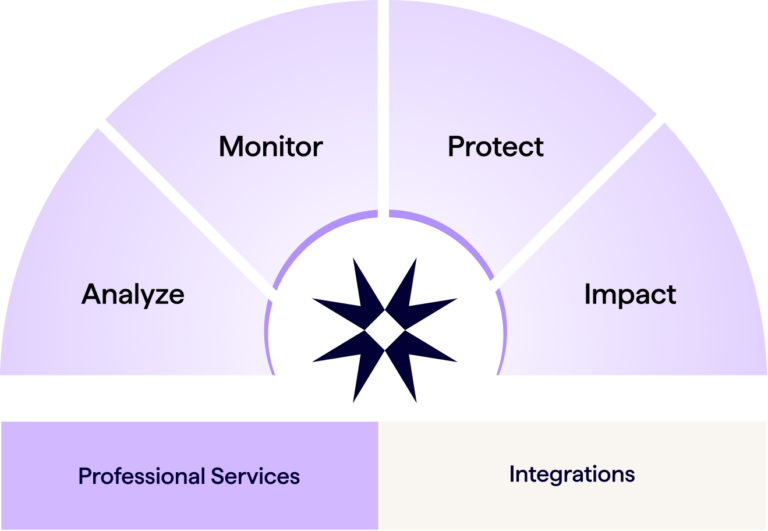

The fastest website crawler on the market.

Built for speed and scale. Lumar’s industry-renowned website crawler leverages best-in-class serverless architecture design so you can crawl as fast as your infrastructure allows (up to 450 URLs per second in testing).

With hundreds of reports across technical SEO, site speed and accessibility, plus opportunities for tailored analytics, Lumar gives you all the insights you need to boost your website’s performance, rankings, conversions and revenue.

Learn more about our crawler

Easily identify, prioritize, and fix technical issues impeding your site’s search performance.

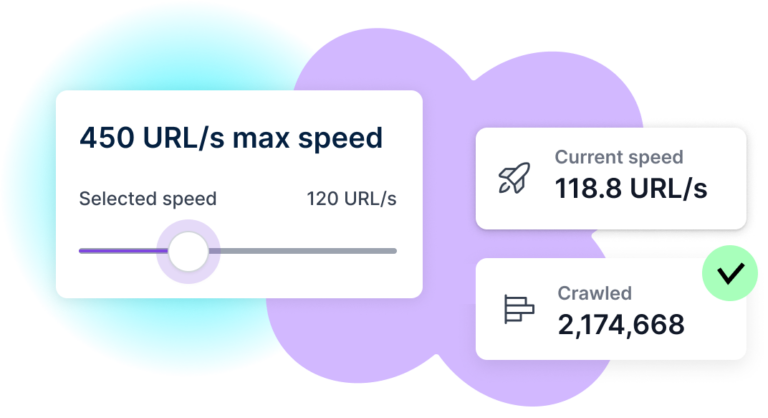

With more than 250 reports on key technical SEO issues, Lumar gives you a comprehensive view of the technical foundations of your site. Our traffic funnel and health scores eliminate data overload, so you can quickly spot opportunities for growth and easily communicate status and progress to key stakeholders. And with Lumar, you get complete flexibility over your crawl strategy, so you can crawl as little or as much of your site as you need.

Lumar for Technical SEO

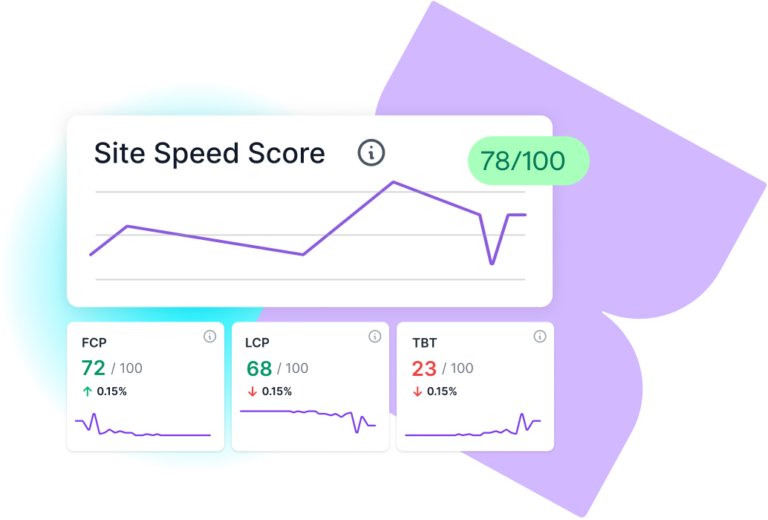

Improve performance and reduce costs by identifying and prioritizing speed issues at scale

Lumar gives you not only the analysis of what needs fixing, but critically the details you need to understand the issues and take action. Prioritize effectively with aggregated issues that help you identify the biggest opportunities to drive a high-performing, revenue-driving site that delivers a great experience.

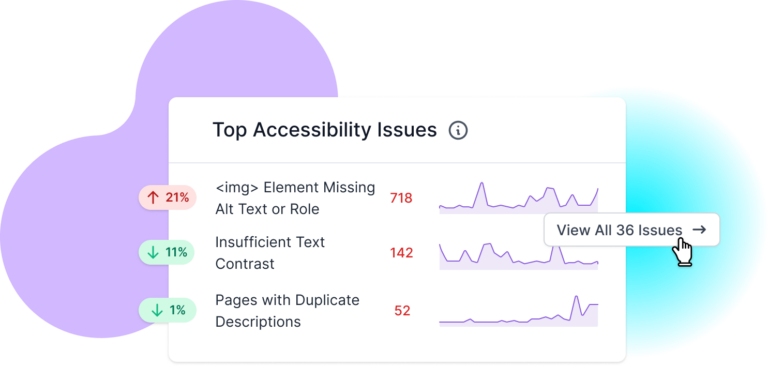

Lumar for Site Speed

Create accessible online experiences & meet WCAG compliance standards

Lumar provides the digital accessibility insights you need to deliver a great experience for all users. Covering all automatable tests for WCAG 2.2 levels A through AAA, Lumar helps you easily identify accessibility issues and save time with effective prioritization, fewer false positives on color contrast metrics, and automated a11y QA testing. And with our AI-generated suggested solutions, you can quickly understand not just what needs fixing, but also how to do it.

Lumar for Website Accessibility

The website intelligence you need to craft better digital experiences

It’s no secret: high-performing websites can drive enormous business growth.

But when it comes to realizing this potential, digital marketing leaders are often in the dark. Not anymore: Lumar is the lightbulb moment you’ve been waiting for. Monitor and benchmark your site’s technical health to improve SEO and accessibility compliance, drill down into the details with robust analytics, and discover new opportunities for revenue-driving organic growth.

How marketing teams use Lumar

Trusted by enterprise website teams around the globe

Lumar is ranked as a G2 leader in SEO software

Discover new opportunities for website-driven growth with Lumar

The technical foundation of your website plays a major role in how well your business performs in search engines — and how well it converts visitors into customers.

See your website’s technical health in a new light with Lumar.